Continuous security monitoring (CSM) is a term that frequently comes up when discussing network...

When assessing the options available, it can be difficult to understand the nuances between various...

TL;DR: A large U.S. university lacked sufficient visibility into a large segment of its environment...

It is easy to confuse intrusion detection systems (IDS) with intrusion prevention systems (IPS),...

“By failing to prepare, you are preparing to fail.” - Benjamin Franklin

No conversation about intrusion detection systems is complete without also taking time to look at...

One of the most common questions people have about intrusion detection systems (IDS) is where to...

TL;DR: An American school district needed to monitor over 5000 school-owned student devices, making...

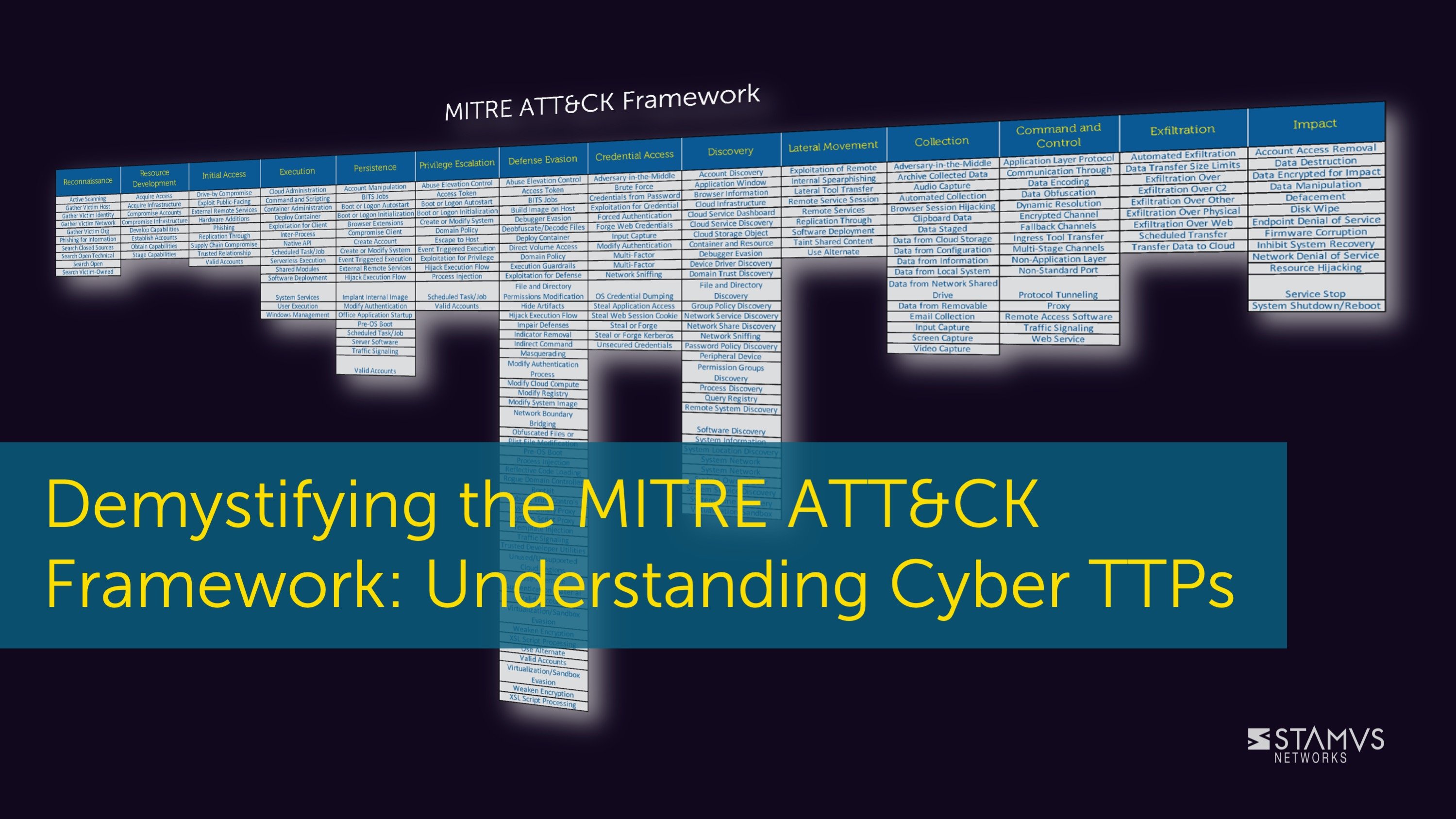

To create an effective cyber security strategy, organizations must first have a good understanding...

You might be aware that intrusion detection systems (IDS) are incredibly effective ways to identify...

TL;DR: A European managed security service provider seeking to launch an MDR service chose Stamus...

In this blog post, we delve into the key requirements of network detection and response (NDR),...

For those new to the world of intrusion detection systems (IDS), you may be unaware that there are...

Network detection and response (NDR) is a critical component of a comprehensive cyber defense...

Network Detection and Response (NDR) is a highly capable cyber security solution for proactively...

For those new to open-source network security tools, learning the differences in various options...

Did you know there are actually several different IDS detection types used by different intrusion...

Network detection and response (NDR) plays a vital role in many organizations’ cyber security...

Recent changes to the behavior of major browsers have rendered the popular JA3 fingerprinting...

One cannot compare Suricata vs Zeek without also comparing these tools to the popular Snort. While...

Intrusion detection systems are an incredibly popular first line of defense for many organizations...

Cybersecurity is always changing, and as new product categories continuously enter the market it is...

When discussing open-source intrusion detection tools, only three names routinely appear as IDS...

For absolute beginners in the world of intrusion detection systems (IDS), it is important to know...

Understanding the benefits of network detection and response (NDR) can be difficult if you are...

One cannot talk about intrusion detection systems (IDS) without also discussing intrusion...

Before beginning any sort of threat hunt, it is important to consider the tools you are using. This...

If you’ve been keeping up to date with the Stamus Networks blog, then you are likely well...

This week we announced that an important new software release Update 39.1 (or “U39.1”) for our...

Deciding between open-source network security tools can be a difficult task, but once you’ve...

Choosing an intrusion detection system (IDS) is hard enough, but it gets even more difficult...

At Stamus Networks, we are wrapping up another great year, so it is time to again review the news,...

Network detection and response (NDR) is becoming an increasingly popular topic in cyber security....

No conversation about open-source intrusion detection tools is complete without the inclusion of ...

Like firewalls, intrusion detection systems (IDS) are incredibly popular early lines of defense for...

This is a follow-up to our third blog on hunting using the publicly available Newly Registered...

Network Detection and Response (NDR) is an incredibly effective threat detection and response...

Many people mix up the different types of intrusion detection systems (IDS), but it is very...

Network detection and response (NDR) is a growing product category in cybersecurity. If you are...

When it comes to open-source intrusion detection tools, there are only three systems that any...

Before deciding on whether or not an intrusion detection system (IDS) might be right for your...

Network Detection and Response (NDR) comes with several advantages for organizations looking to...

This is a follow-up to our second blog on hunting using the publicly available Newly Registered...

Choosing between the various options for open-source intrusion detection tools can be a difficult...

Understanding the nuances of different types of intrusion detection systems (IDS) can be tricky,...

This is a follow-up to our first blog on hunting using the publicly available Newly Registered...

Gartner is a highly respected voice when it comes to recommendations on cybersecurity products....

When discussing intrusion detection systems (IDS), or more specifically network intrusion detection...

Network detection and response (NDR) has been steadily increasing in popularity as organizations...

Cozy Bear — also known as APT29, CozyCar, CozyDuke, and others — is a familiar name to security...

Many professionals in cybersecurity often look to research firm Gartner for insights into new...

In a previous blog post, we announced the release of Open NRD from Stamus Networks - a set of threat...

In cyber security, we commonly talk about different product categories like intrusion detection...

Network detection and response (NDR) is beginning to play a larger role in many organizations’...

In an era of rapidly advancing technology and digital transformation, the realm of cybersecurity is...

Suricata vs Snort? Choosing between these two incredibly popular open-source intrusion detection...

It is easy to get confused about the various types of intrusion detection system (IDS) examples,...

In the early stages of learning about network detection and response (NDR), it can be difficult to...

This article describes the details of the new Open NRD threat intelligence feeds provided by Stamus...

Comparing Suricata vs Snort isn’t always easy. Both options are incredibly popular intrusion...

Before making any decisions on using an intrusion detection system (IDS), it is vitally important...

In a previous blog post, we compiled a number of useful JQ command routines for fast malware PCAP...

Network detection and response (NDR) is still a newer product category in cyber security, and as a...

For a large organization, keeping track of numerous security systems or internal security policies...

For those new to network detection and response (NDR), it can be confusing to understand the...

Previously, we compiled a number of useful JQ command routines for fast malware PCAP network...

Operating since 2008, the shadowy figure of Fancy Bear has emerged as a formidable force in the...

The cybersecurity landscape is constantly changing, with threat actors always looking for new...

In a previous blog post, we compiled a number of useful JQ command routines for fast malware PCAP...

As cloud and hybrid environment adoption grows, so does the need for network detection and response...

If you have ever worked for a large enterprise, then you may be familiar with the term “enterprise...

Network detection and response (NDR) could be the answer your organization is looking for to solve...

Network detection and response (NDR) has been quickly gaining ground as a respected cyber security...

When a threat researcher is investigating malware behavior and traces on the network, they need a...

Back in 2022, I did a Suricon presentation titled Jupyter Playbooks for Suricata. This led into a...

In our past series, “Threat! What Threats?” we covered the topic of phishing in a generic way, but...

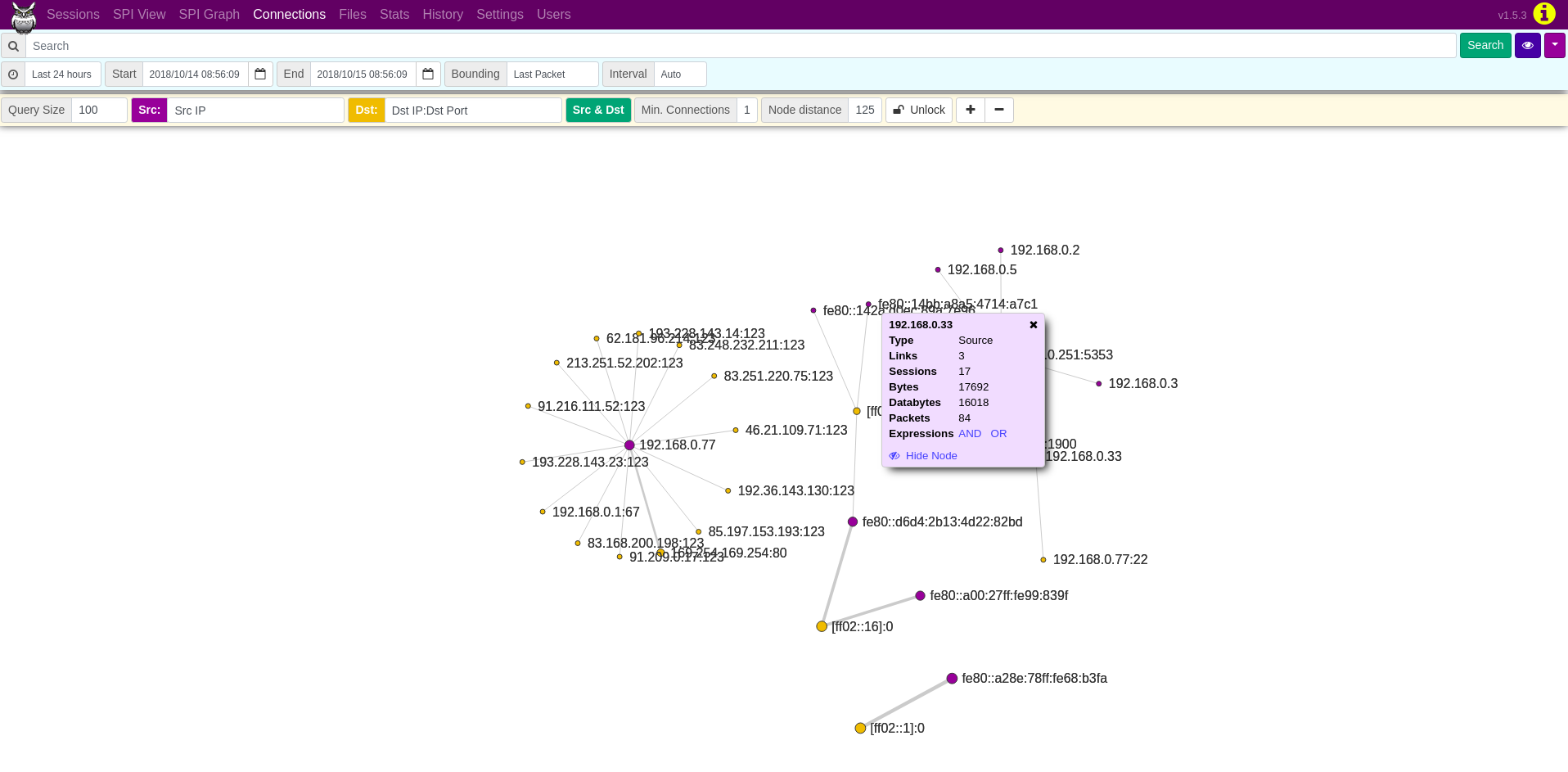

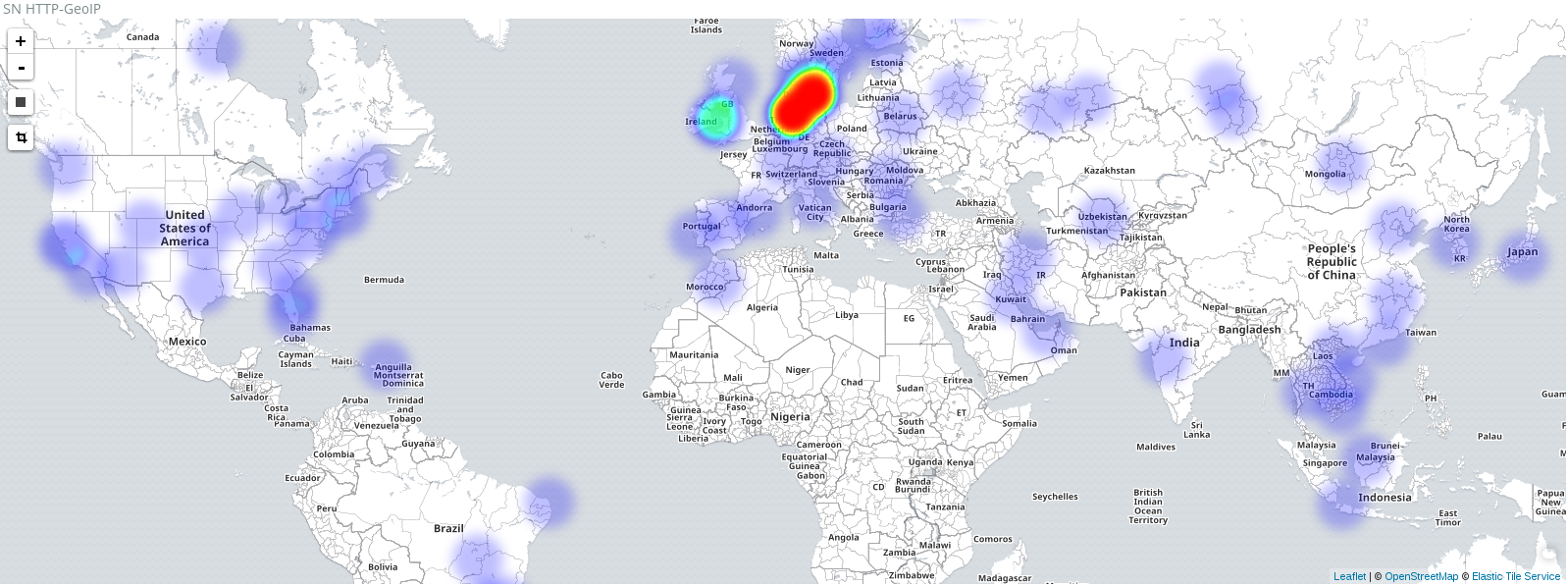

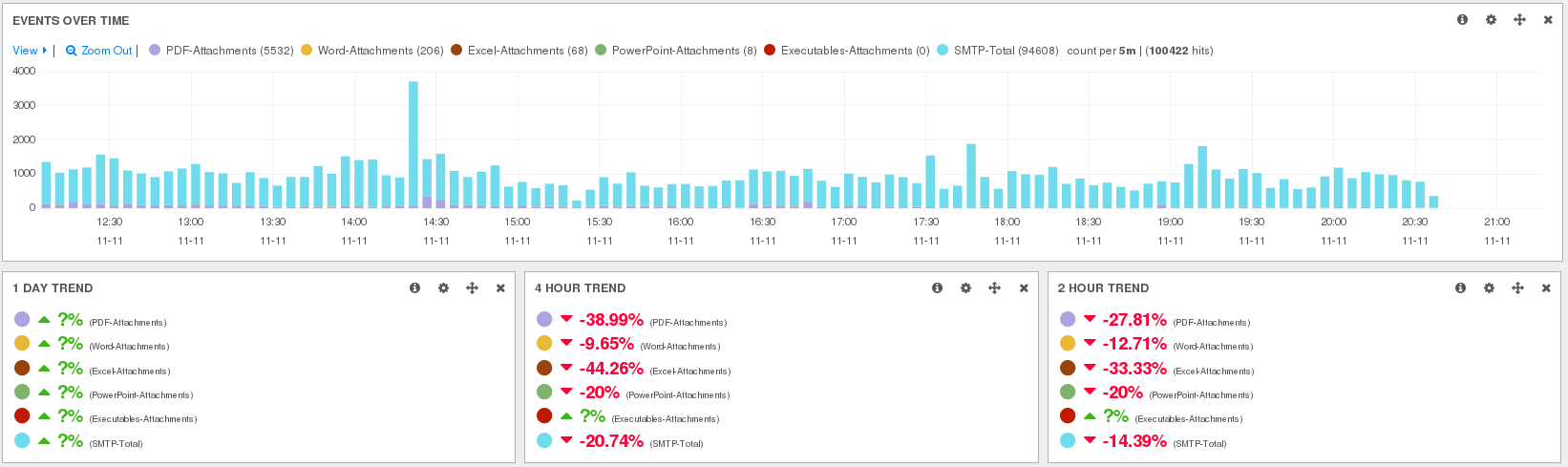

Visualizing network security logs or data is a crucial aspect of effectively analyzing and...

Today I am thrilled to share some incredible news. It is with great excitement and pride that I...

This week’s guided threat hunting blog focuses on hunting for high-entropy NRD (newly registered...

Every day, new Internet domains are registered through the Domain Name System (DNS) as a natural...

One of the unique innovations in the Stamus Security Platform is the feature known as Declaration...

Have you ever counted how many computer devices, smart IoT gadgets, TVs, kitchen appliances,...

Yesterday (18-July-2023) the OISF announced the general availability of Suricata version 7. It’s...

When an organization wants to learn more about the tactics, techniques, and procedures (TTP) used...

In the past few blog posts, we have discussed at length the importance of creating a comprehensive...

The cyber kill chain is a widely-used framework for tracking the stages of a cyber attack on an...

On 15-June-2023 the OISF announced a new release of Suricata (6.0.13) which fixes a potential...

Endpoint security is one of the most common cybersecurity practices used by organizations today....

Network security plays a crucial role in today's digital landscape as it safeguards sensitive...

Cyber threats are becoming increasingly sophisticated and pervasive, causing organizations to place...

Are you looking to improve your threat hunting and network-based forensic analysis skills with...

Threat hunting is a common practice for many mature security organizations, but it can be time...

Writing Suricata rules has never been easier or faster since the release of the Suricata Language...

Earlier this week, we introduced the second set of visualizations provided by the SN-Hunt-1 Kibana...

Last week, we introduced the first set of visualizations provided by the SN-Hunt-1 Kibana dashboard...

This is the third post in a series based on my Suricon 2022 talk “Jupyter Playbooks for Suricata”....

Today, we announced the general availability of Update 39 (U39) - the latest release of the Stamus...

Recently, we released a blog post detailing how you can solve the Unit 42 Wireshark quiz for...

A couple of weeks ago, we covered how Stamus Security Platform (SSP) users can harness the power of...

This blog describes how to solve the Unit 42 Wireshark quiz for January 2023 with SELKS instead of...

Stamus Security Platform (SSP) users can now integrate the Malware Information Sharing Platform...

Intrusion Detection Systems (IDS) can be powerful threat detection tools, but IDS users frequently...

This is the second post in a series that will be based on my Suricon 2022 talk “Jupyter Playbooks...

In a recent conversation, one of our customers shared their concerns about the use of ChatGPT in...

This blog describes the steps Stamus Networks customers may take to determine if any of your...

This is the first post in a series that will be based on my Suricon 2022 talk “Jupyter Playbooks...

Because cybersecurity teams face numerous threats from bad actors that are continually devising new...

When it comes to cyber threats, we understand that a threat to one organization can quickly become...

This week’s guided threat hunting blog focuses on verifying a policy enforcement of domain...

Maintaining an effective security posture is difficult enough for any organization. But for those...

A while back I wrote a blog post about a packet filtering subcommand I implemented into GopherCAP....

As we celebrate the beginning of another new year, we’d like to take a glimpse back at the news,...

It is not uncommon to see executable file transfers within an organization. However, it is...

BlackHat Europe 2022 was the last conference of an eventful year for our team at Stamus Networks....

2022 is coming to an end, and as we wrap up another great year at Stamus Networks I wanted to take...

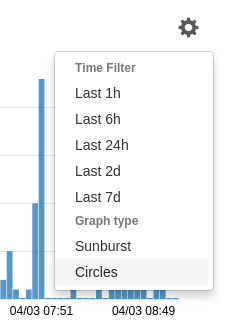

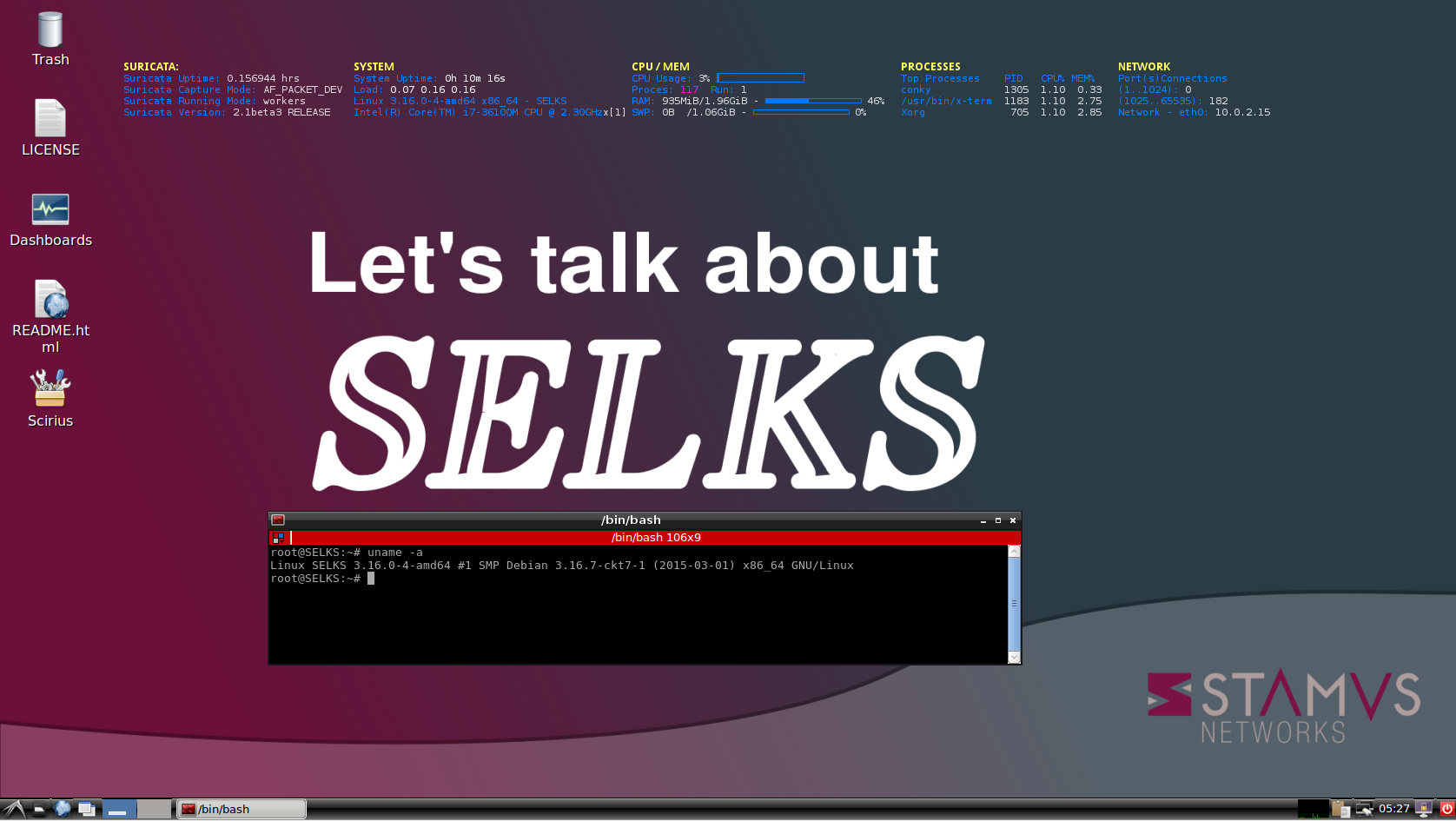

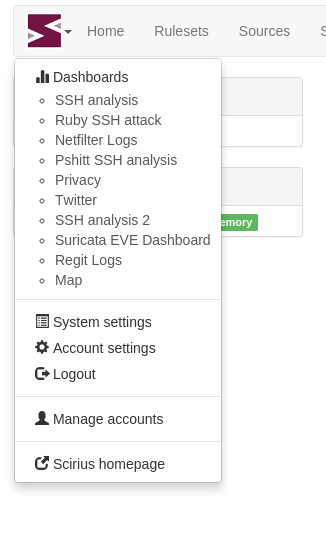

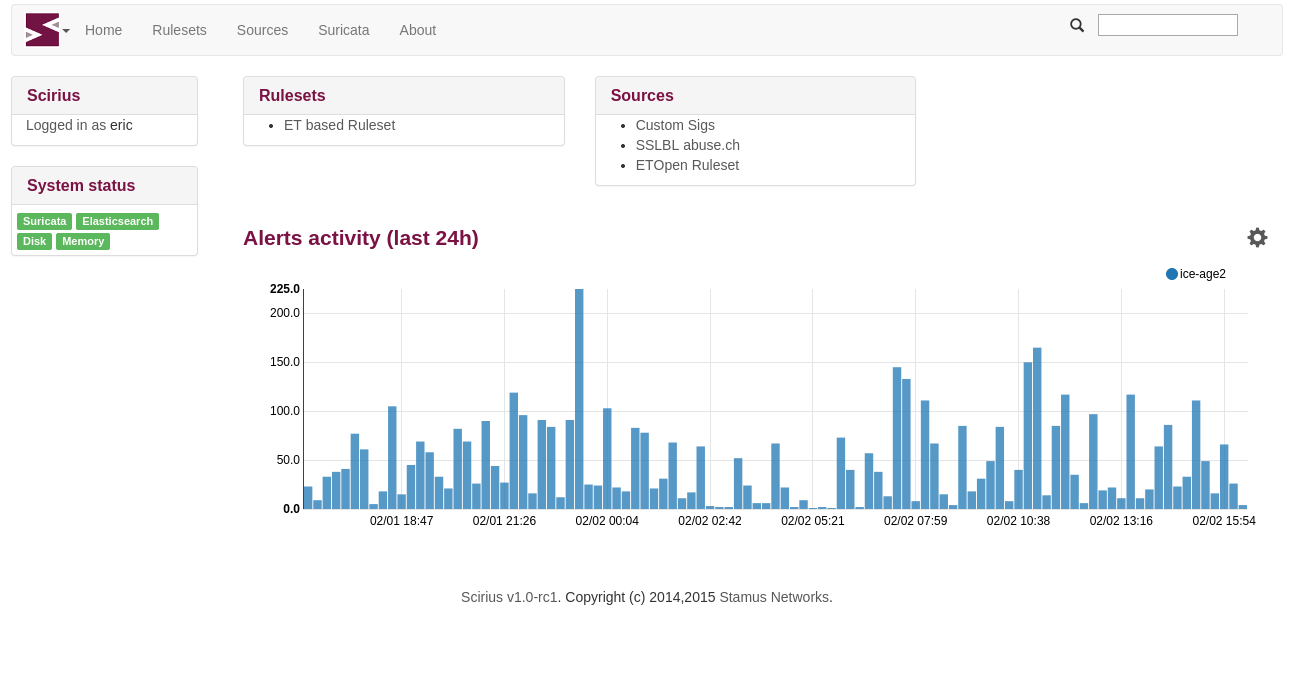

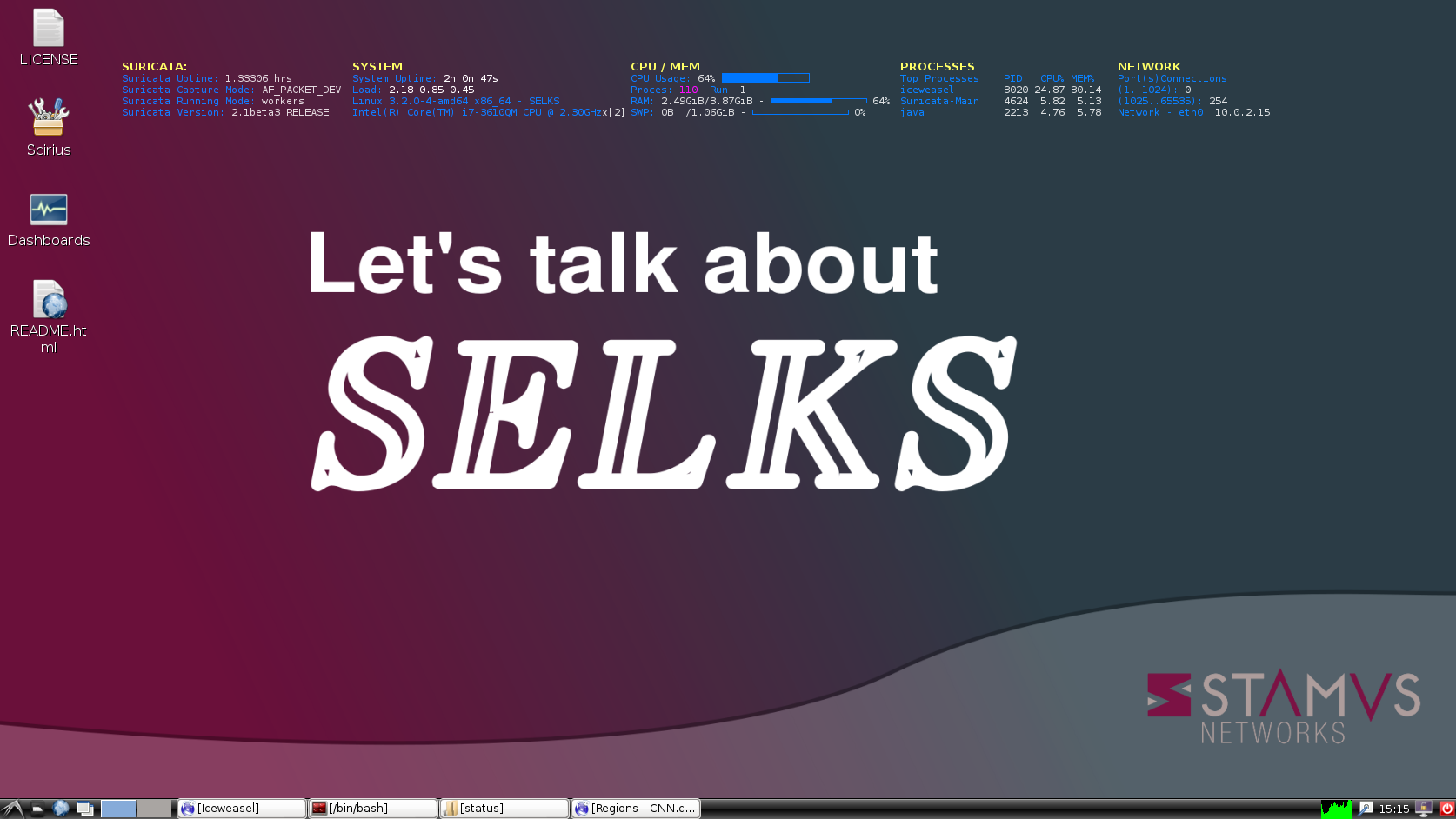

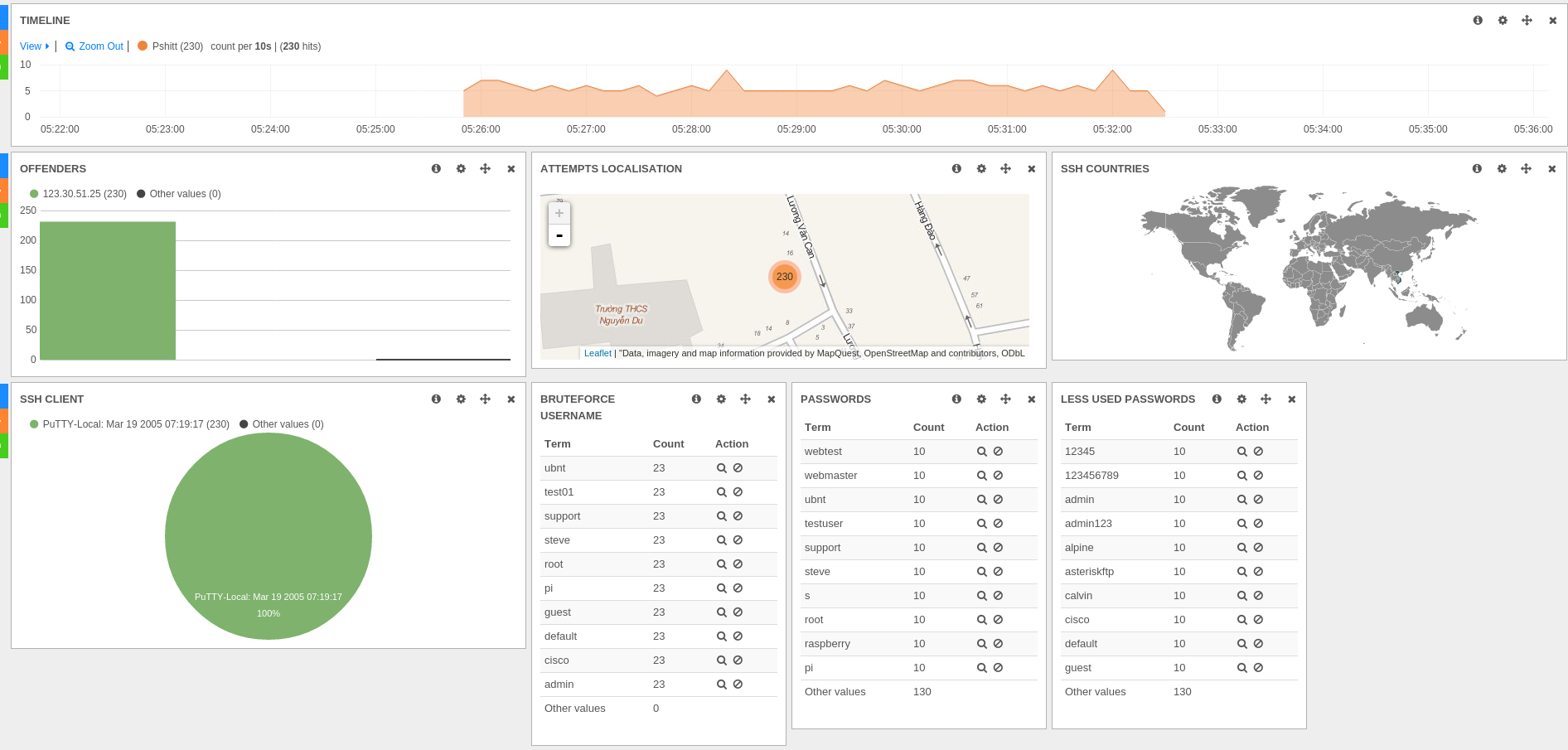

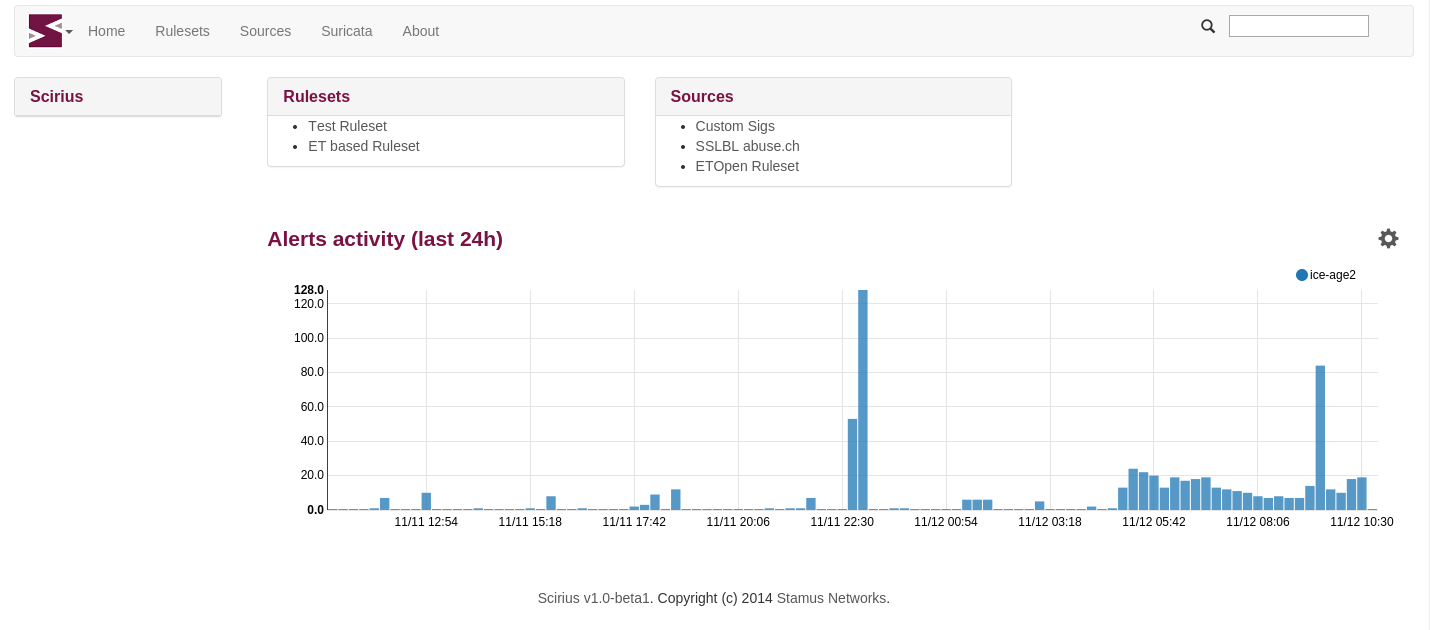

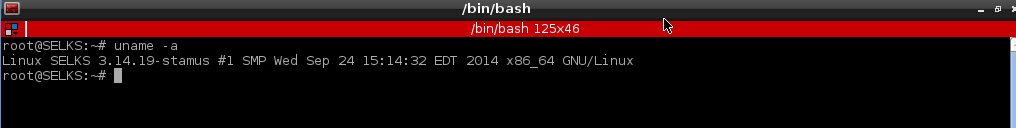

SELKS is a turnkey Suricata-based IDS/IPS/NSM ecosystem that combines several free, open-source...

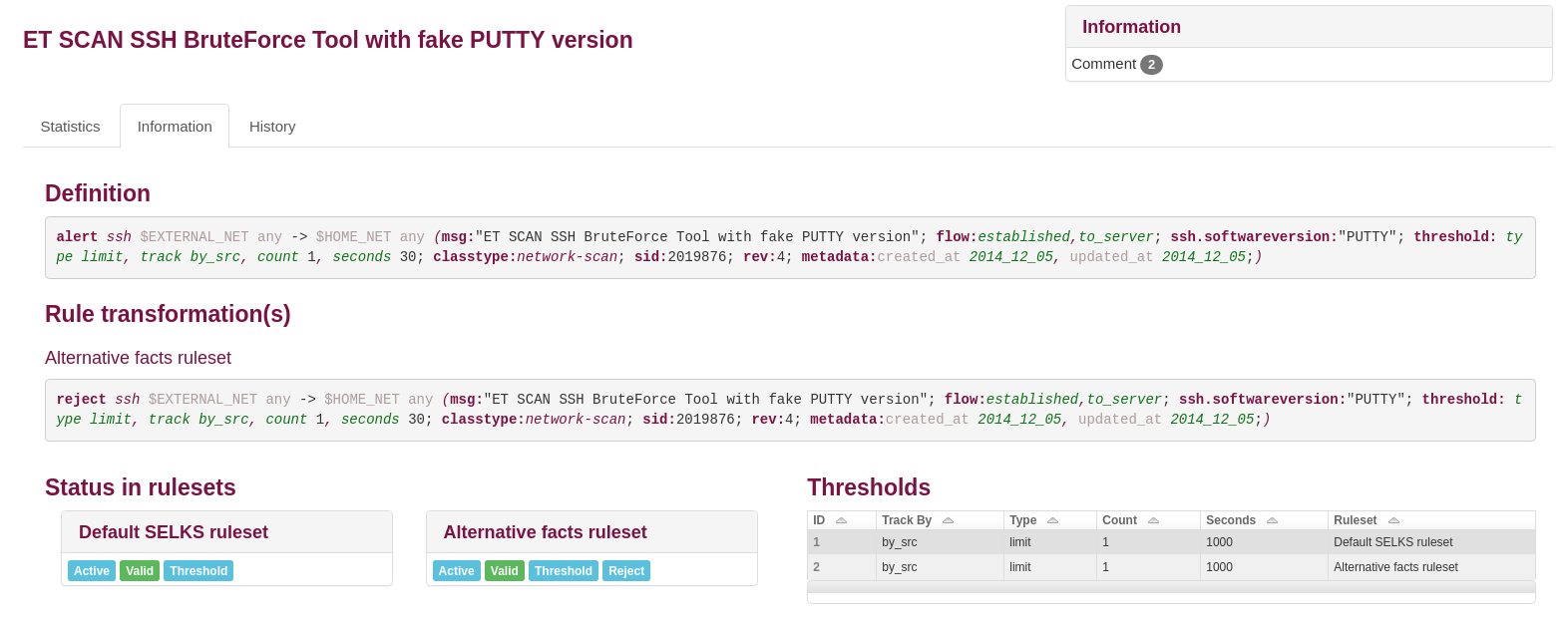

When you already know the specific attacks faced by your organization, then the basic detection...

Punycode domains have traditionally been used by malware actors in phishing campaigns. These...

Just a few weeks after our last event, Suricon 2022, Stamus Networks is heading off to London for...

The latest version (1.0.1) of the Stamus App for Splunk adds TLS cipher suite analysis. Conducting...

Intrusion detection systems (IDS) function incredibly well when it comes to making signature-based...

Last week our team was in Athens for the biggest Suricata conference this year - Suricon 2022. The...

As we celebrate the first week after launching our new book “The Security Analyst’s Guide to...

When you see a domain request from a user/client to a non-local or otherwise unfamiliar or...

This blog describes the steps Stamus Networks customers may take to determine if any of your...

TL;DR

Stamus Networks uses OpenSSL in the Stamus Security Platform (SSP) as well as our open source

Non-local domain requests from the user/client network could signal trouble for an organization....

Each year, Suricon attracts visitors from all over the world for three days of knowledge sharing...

DNS over HTTPS (DoH) is a network protocol used to protect the data and privacy of users by...

Command-and-control (C2) attacks are bad news for any organization. Attackers use C2 servers to...

Plain text executables (such as those downloaded from a PowerShell user agent) are often seen on...

Intrusion detection systems (IDS) have proven to be a highly effective and commonly used method of...

This week in our series on guided threat hunting, we are focusing on locating internal use of...

This week’s guided threat hunting blog focuses on hunting for foreign domain infrastructure usage...

This week’s guided threat hunting blog focuses on hunting for Let’s Encrypt certificates that were...

In this week’s guided threat hunting blog, we will focus on hunting for Let’s Encrypt certificates...

In this week’s guided threat hunting blog, we focus on using Stamus Security Platform to identify...

This week’s threat detection blog dives deeper into a common type of malware, remote access trojans...

In this week’s guided threat hunting blog, we focus on using Stamus Security Platform to uncover...

In this week’s threat detection blog, we will be reviewing a financially-motivated threat that is...

This week’s guided threat hunting blog focuses on a specific policy violation - the use of...

This week we are taking a closer look at Shadow IT, which is the use of information technology by...

This week’s guided threat hunting blog focuses on policy violations; specifically, violations...

For week 2 of our series on guided threat hunting, we will be reviewing a hunting technique to...

Last week Stamus Networks participated in BlackHat USA 2022, an international cybersecurity...

So, what’s next? You’ve had a successful hunt, uncovered some type of threat or anomalous behavior...

In addition to deploying advanced detection technologies, many security teams make threat hunting...

Stamus Security Platform is loaded with features that help security teams leverage network traffic...

Phishing is widely regarded as the most common and effective way attackers can gain access into a...

In this article, we will review one of the most important and critical phases of the cyber kill...

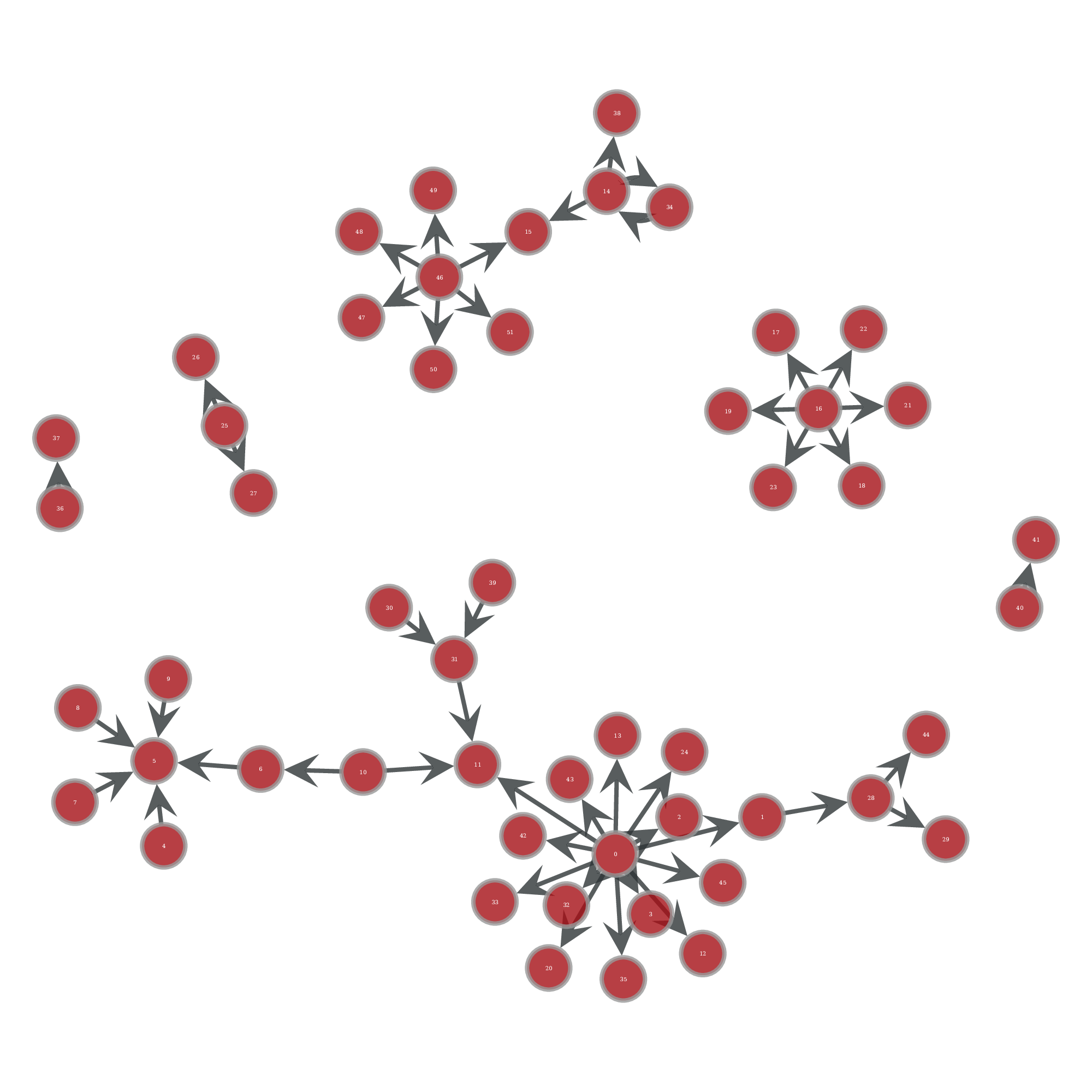

One of the first network-related indications of a botnet or peer-to-peer (P2P) malware infection is...

In this article I want to highlight one of the tactics used by malicious actors to move within your...

In the first article of this series -- Threats! What Threats? -- I mentioned that my colleague,...

When the leadership team at Stamus Networks sat down to discuss our core principles we had to...

When a company decides to capture its core principles, it is important to set expectations on how...

In this series of articles we share hands-on experience from active hunts in the real world. We...

When the leadership team at Stamus Networks got together to capture the core principles of our...

In developing our core principles, the leadership team at Stamus Networks discussed the way we view...

Trust is the foundation of any working relationship. Without it, two organizations cannot amicably...

The world of cybersecurity is rapidly changing and enterprises have to quickly adapt in order to...

RSA Conference San Francisco is back in June 2022 and we are excited to once again be a part of one...

Successful businesses need to maintain a transparent framework which guides their daily practices....

The International Cybersecurity Forum (FIC) is an annual event focused on the operational...

When someone sees a great product, the reaction is often the same. Customers frequently consider...

Today I want to give you a brief tour of what’s new in Update 38 of the Stamus Security Platform...

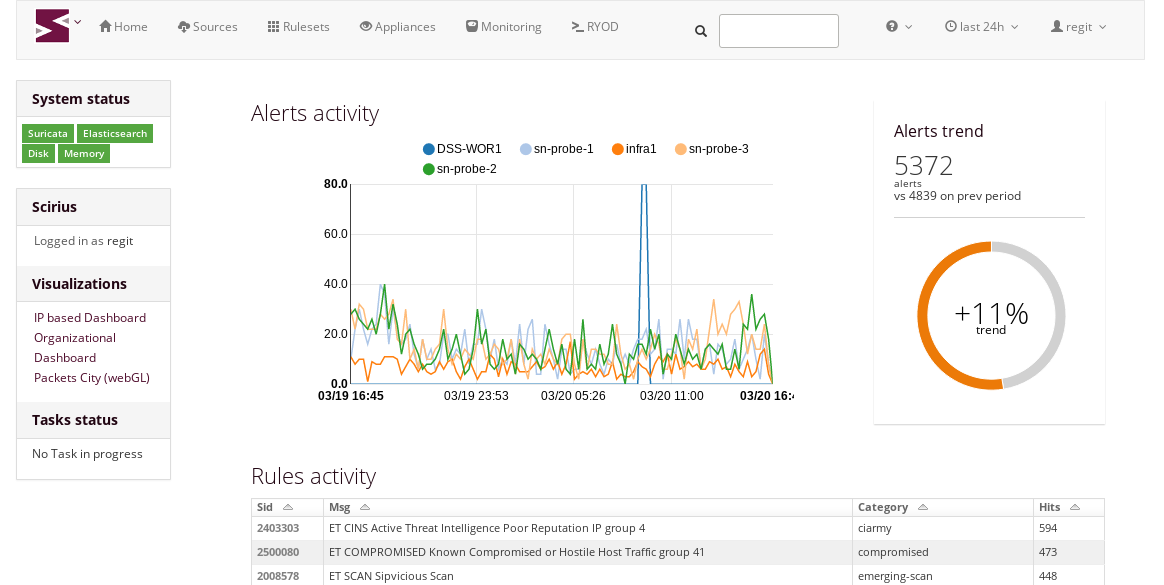

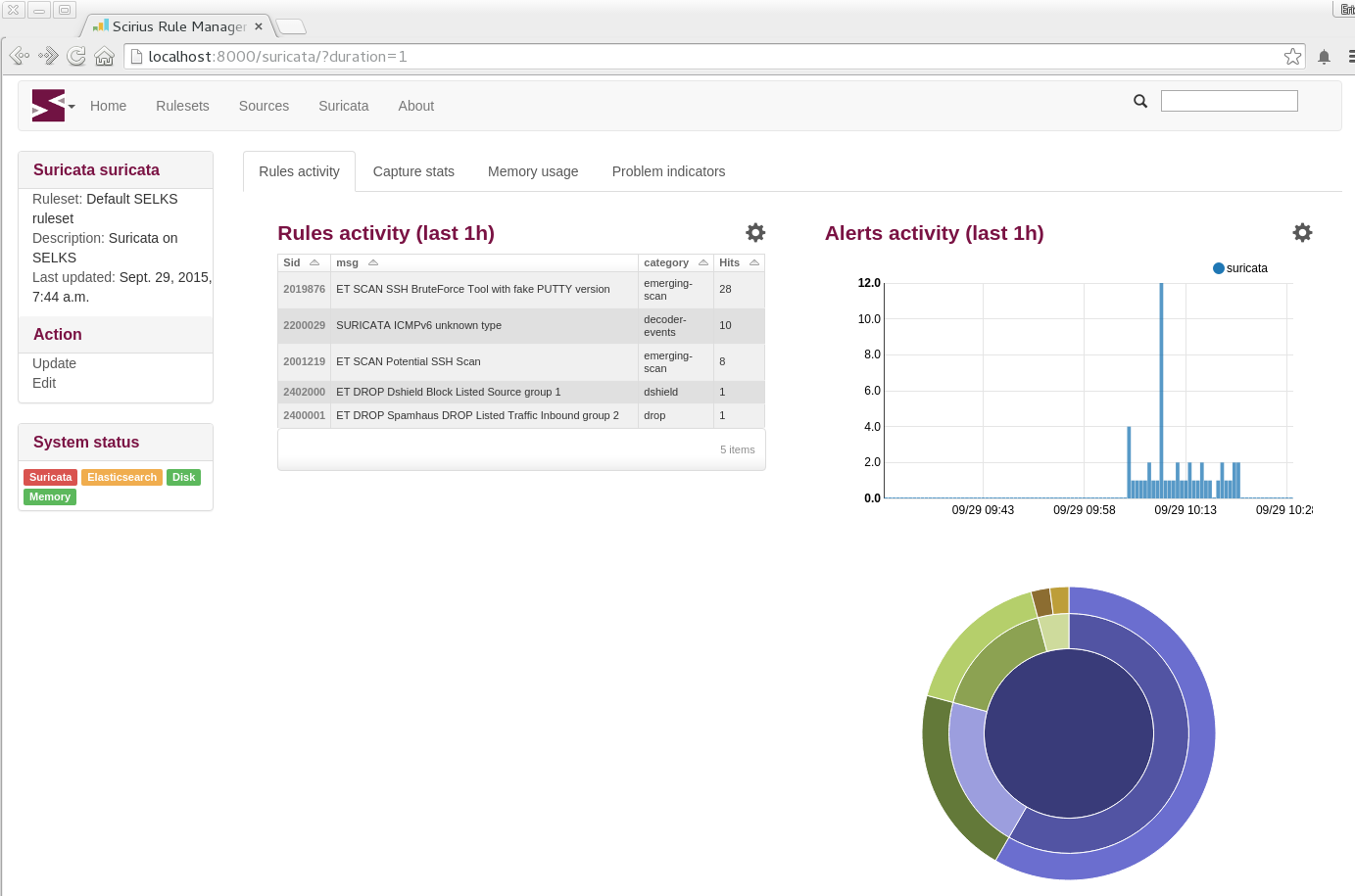

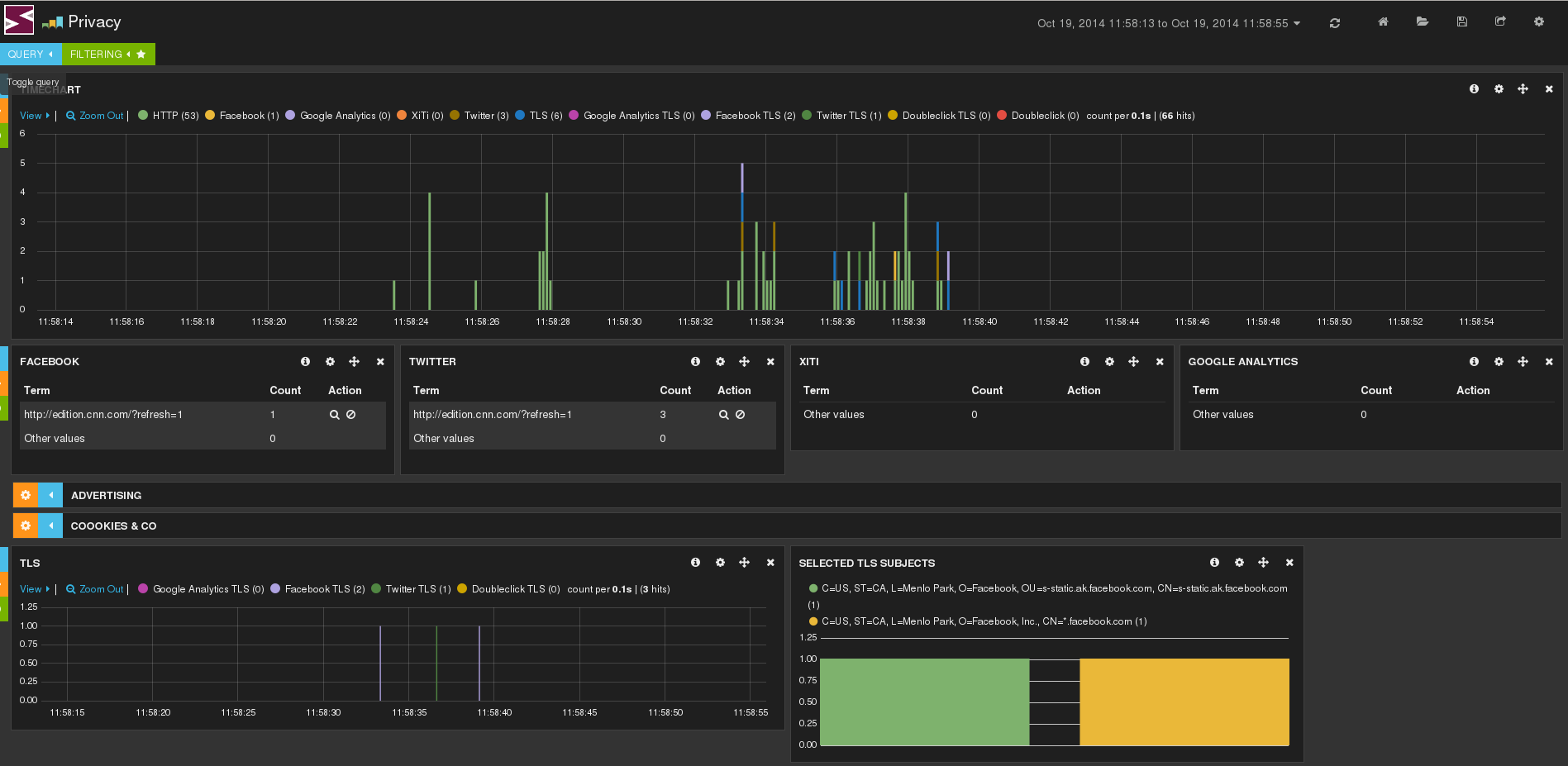

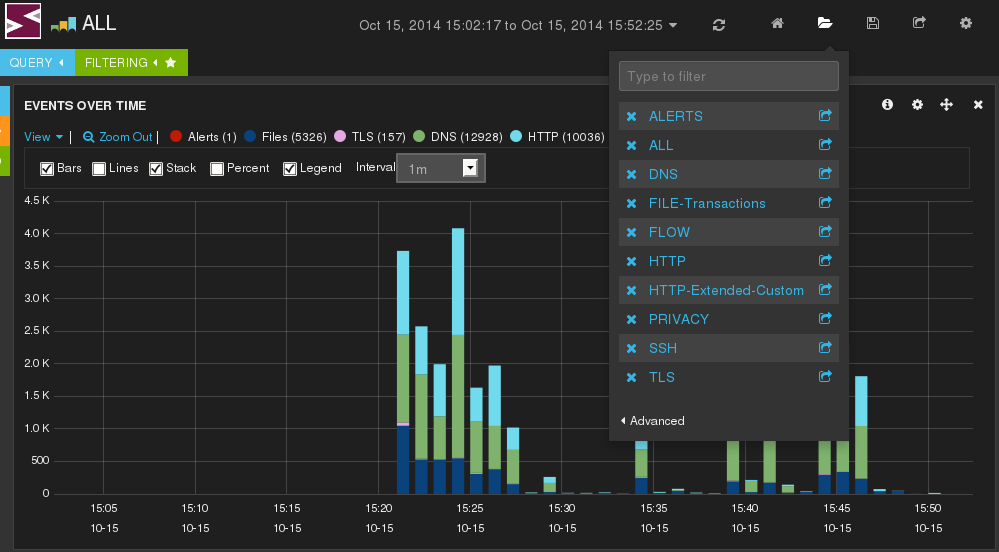

Perhaps the most exciting thing about the release of SELKS 7 is the various practical applications...

This series introduces SELKS 7, the latest update to the free, open-source, turnkey Suricata-based...

In this series, you will get an overview of the SELKS 7 platform, the new updates and functionality...

Existing systems that aggregate network security alerts and metadata do not properly detect and...

With two Cyber Security Summits already behind us, we are ready for the next one. On 7 April 2022,...

On 25 March 2022, my colleague Ed Mohr and I will be attending the Cyber Security Summit in...

In the first article of this series – Threats! What Threats? – I mentioned that my colleague, Steve...

We talk often about “threats” and “threat detection” in our marketing materials and in discussions...

Re-Introduction to PCAP Replay and GopherCAP

A while back we introduced GopherCAP, a simple tool...

This week my colleagues Phil Owens, Charlie Provenza and I will be attending and sponsoring our...

In this series of articles, we explore a set of use cases that we have encountered in real-world...

Security monitoring is perhaps the least discussed element of a Zero Trust strategy

Over the past...

In the previous article of the “Feature Spotlight” series, we discussed how to pivot from IDS alert...

Sometimes, even after extensive training, we forget about important features or ways of using a...

Following the 10-December-2021 announcement of (CVE-2021-44228), Log4shell scanners have begun to...

So, you are considering migrating your legacy or aging intrusion detection and prevention system...

So, you are considering migrating your legacy or aging intrusion detection and prevention system...

Regular readers of this blog and friends of Stamus Networks will know that we are very closely...

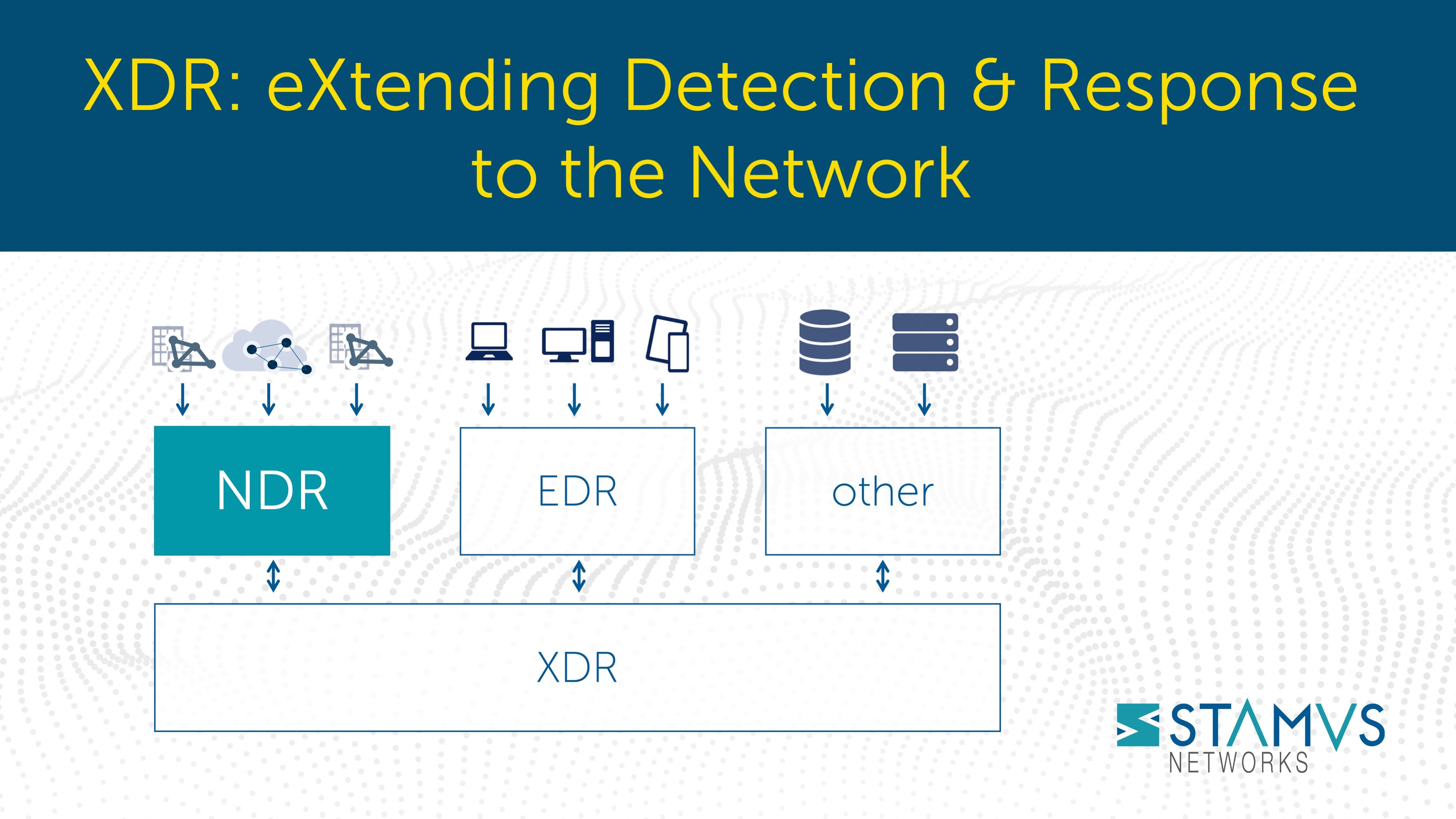

Extended detection and response, or XDR, has generated substantial interest in recent years - and...

On 16 November 2021, my colleague Ed Mohr and I will be giving our second talk entitled “The Case...

Believe it or not, you can launch a turnkey Suricata IDS/IPS/NSM installation – with as few as 4...

The importance of having a strong security team has been growing in recent years, and many...

Next week, Stamus Networks will participate for the first time in SecurityCON, a virtual...

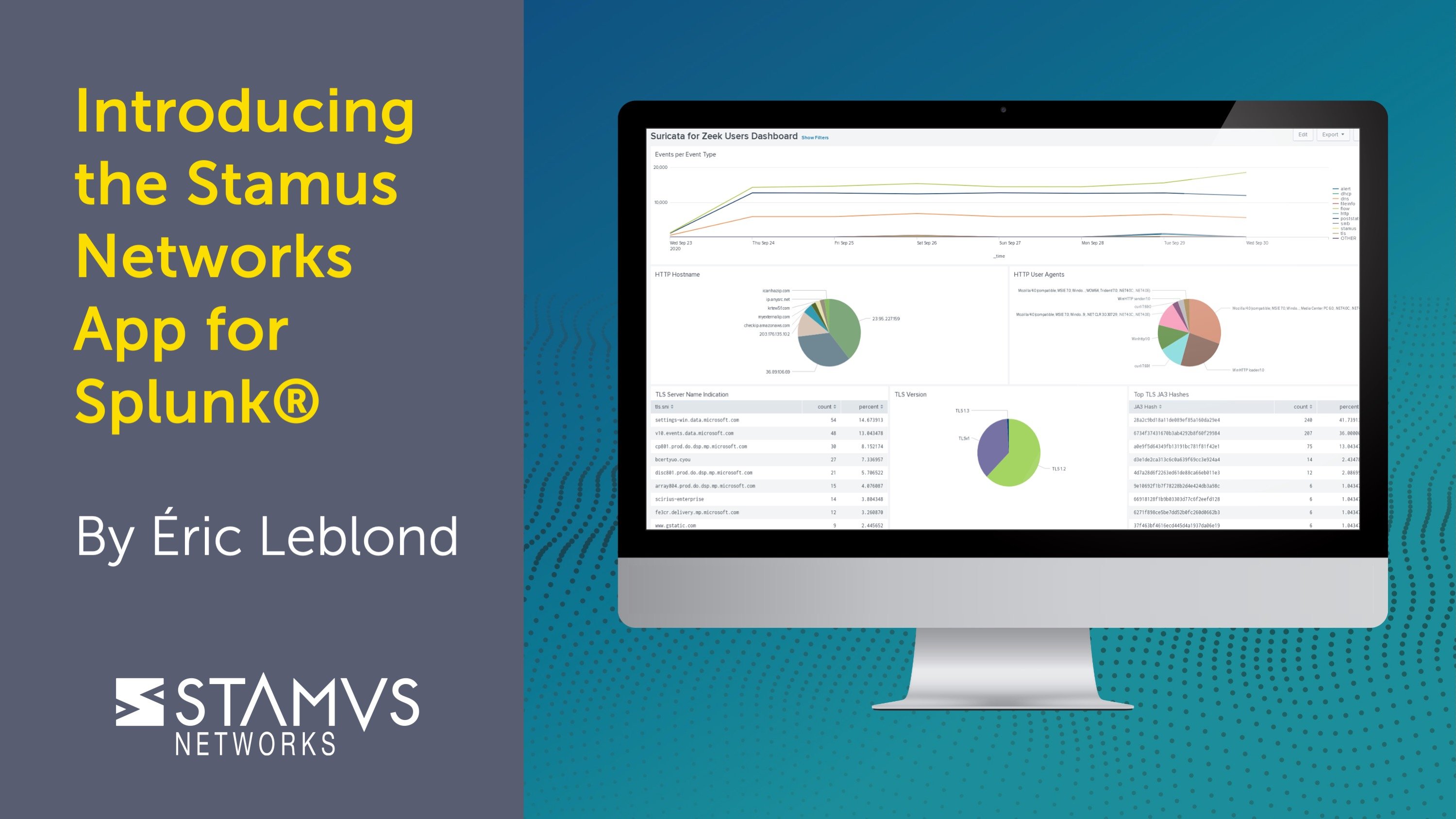

At next week's Suricon 2021, I'll be sharing real world examples of how a new Splunk App can help...

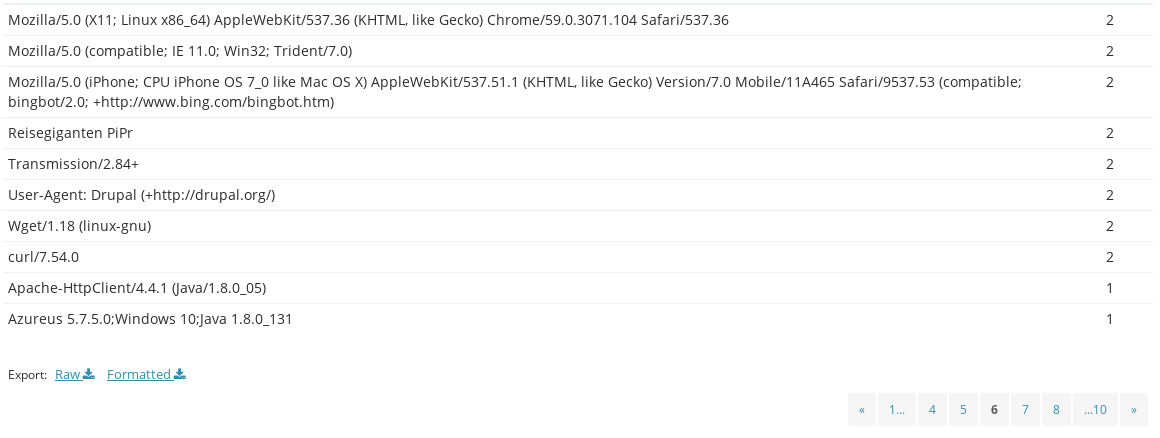

As I mentioned in the introductory article in this series (see here >>), Suricata produces not only...

On 12 October 2021, my colleague Ed Mohr and I will be giving a talk entitled “The Case for...

On 6 October 2021, I’ll be giving a talk entitled “Data Mining TLS Network Traffic.” This is...

Here at Stamus Networks, we are strongly committed to open-source and believe that ease of use has...

When the blue team needs to mount a network defense, they must answer some very common questions:

- ...

In my last blog article, I introduced some of the factors that have contributed to our successes...

Last month, I posted a blog article (Read it here >>) that introduced the new capabilities of our...

In cybersecurity, as soon as you stand still, you’re falling behind. Change, whether it’s in the...

Hello and welcome to my first blog article here at Stamus Networks. My name is Phil Owens and I am...

Suricata, the open source intrusion detection (IDS), intrusion prevention (IPS), and network...

Stamus Security Platform (SSP) helps bank identify threat to its accounting network

With the help...

In this series of articles, we explore a set of use cases that we have encountered in real-world...

Recently, Stamus Networks introduced outgoing webhook capabilities to its Stamus Security Platform....

Background

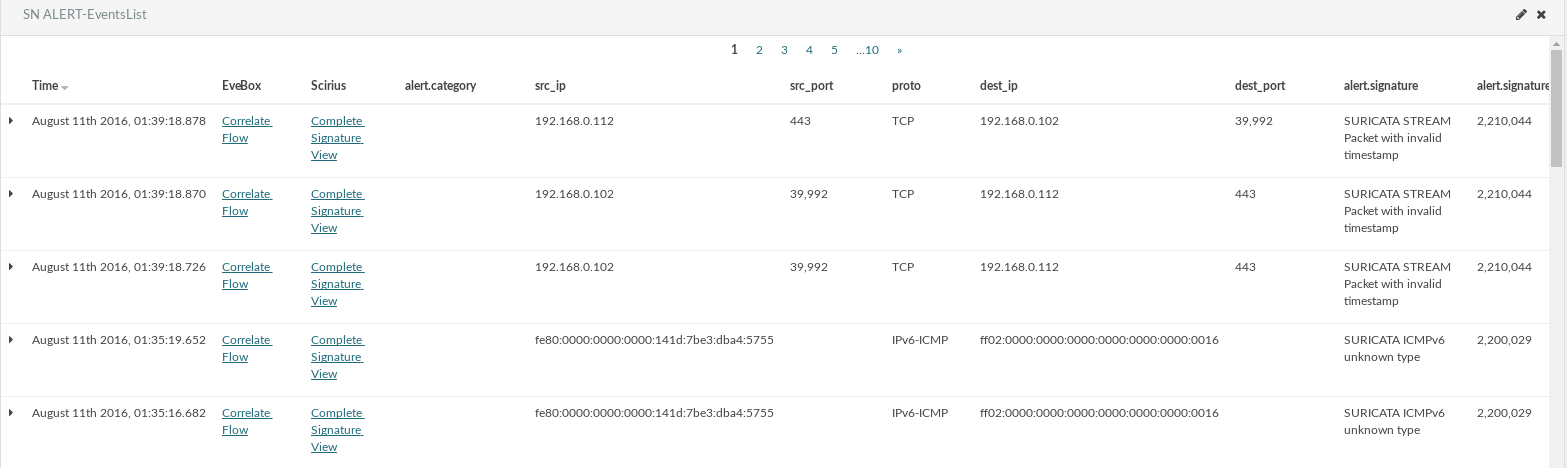

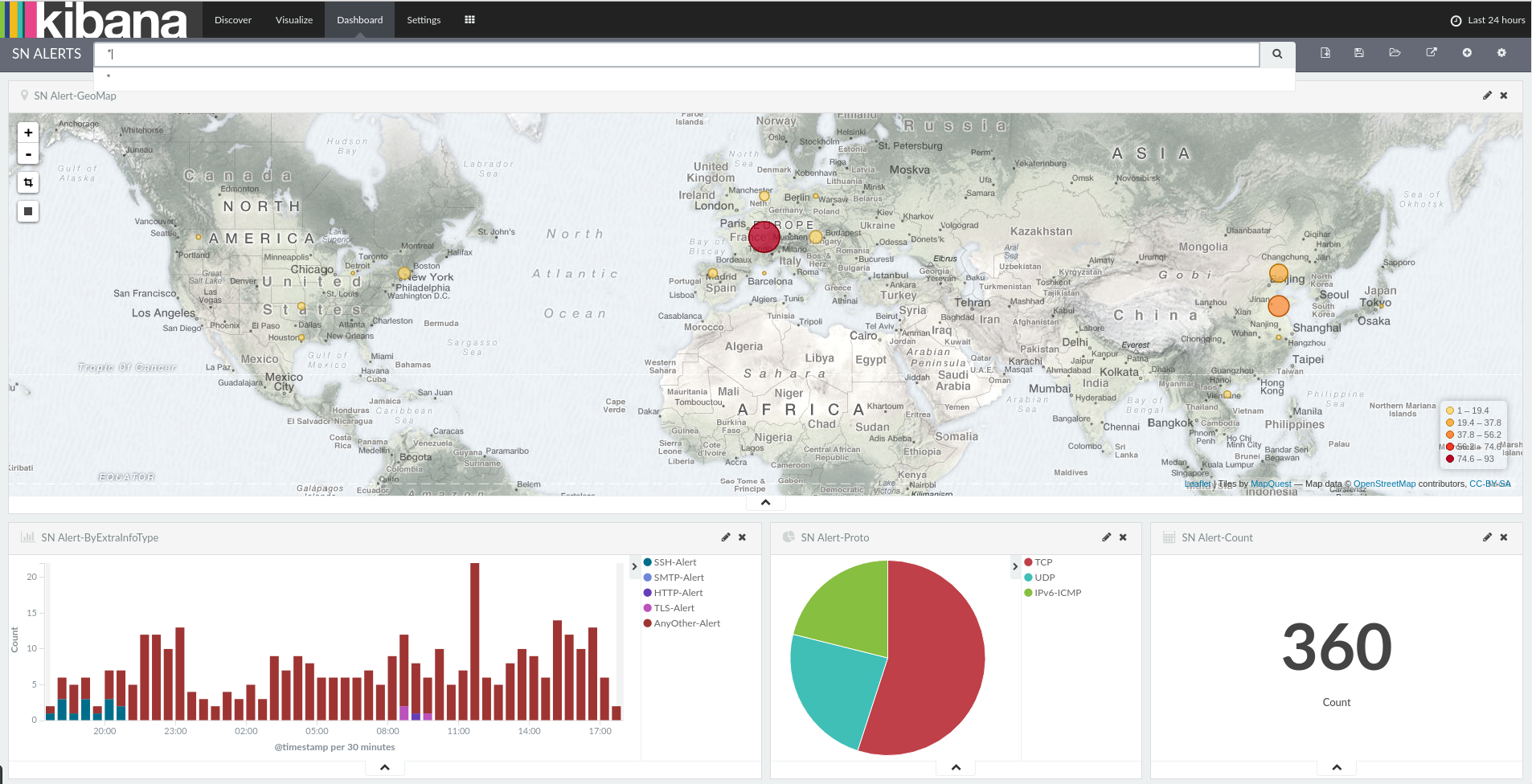

As we have previously written, for all Suricata’s capabilities, building out an...

Background

As we have previously written, for all Suricata’s capabilities, building out an...

Background

As we have previously written, for all Suricata’s capabilities, building out an...

For all Suricata’s capabilities, building out an enterprise-scale deployment of Suricata with...

Exciting news - the OISF just announced that Suricata 6 is now available. This is the culmination...

Cyber security and IT executives today are facing unprecedented challenges: new and increasingly...

Threat hunting—the proactive detection, isolation, and investigation of threats that often evade...

In this series of articles, we will explore a set of use cases that we have encountered in...

Stamus Networks? They are the Suricata company aren’t they? And Suricata? It’s an open source IDS...

As mentioned in an earlier article, organizations seeking to identify cyber threats and mitigate...

Organizations seeking to proactively identify and respond to cyber threats in order to mitigate...

Sometimes the greatest vulnerabilities and risks an organization faces are created by users'...

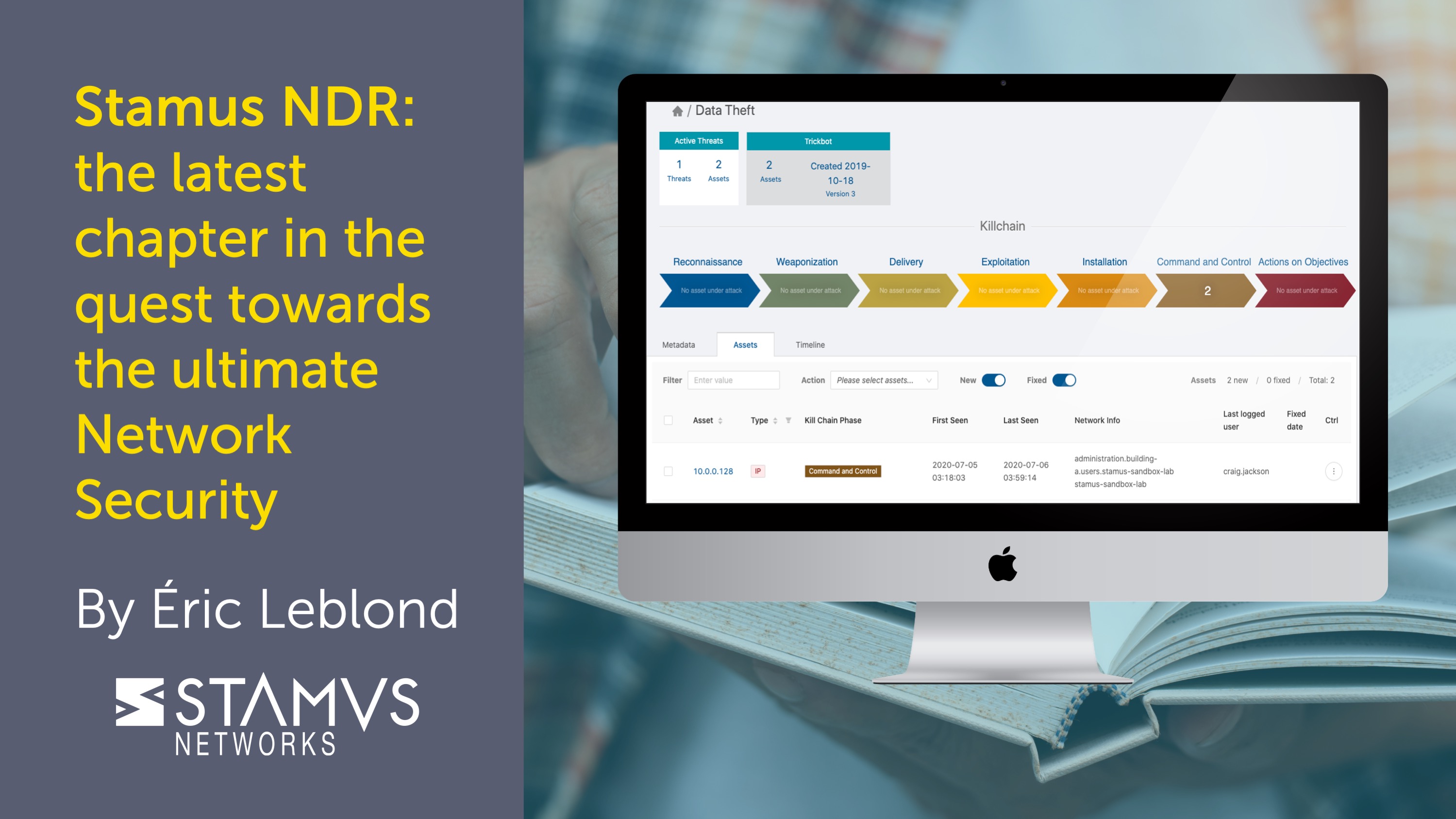

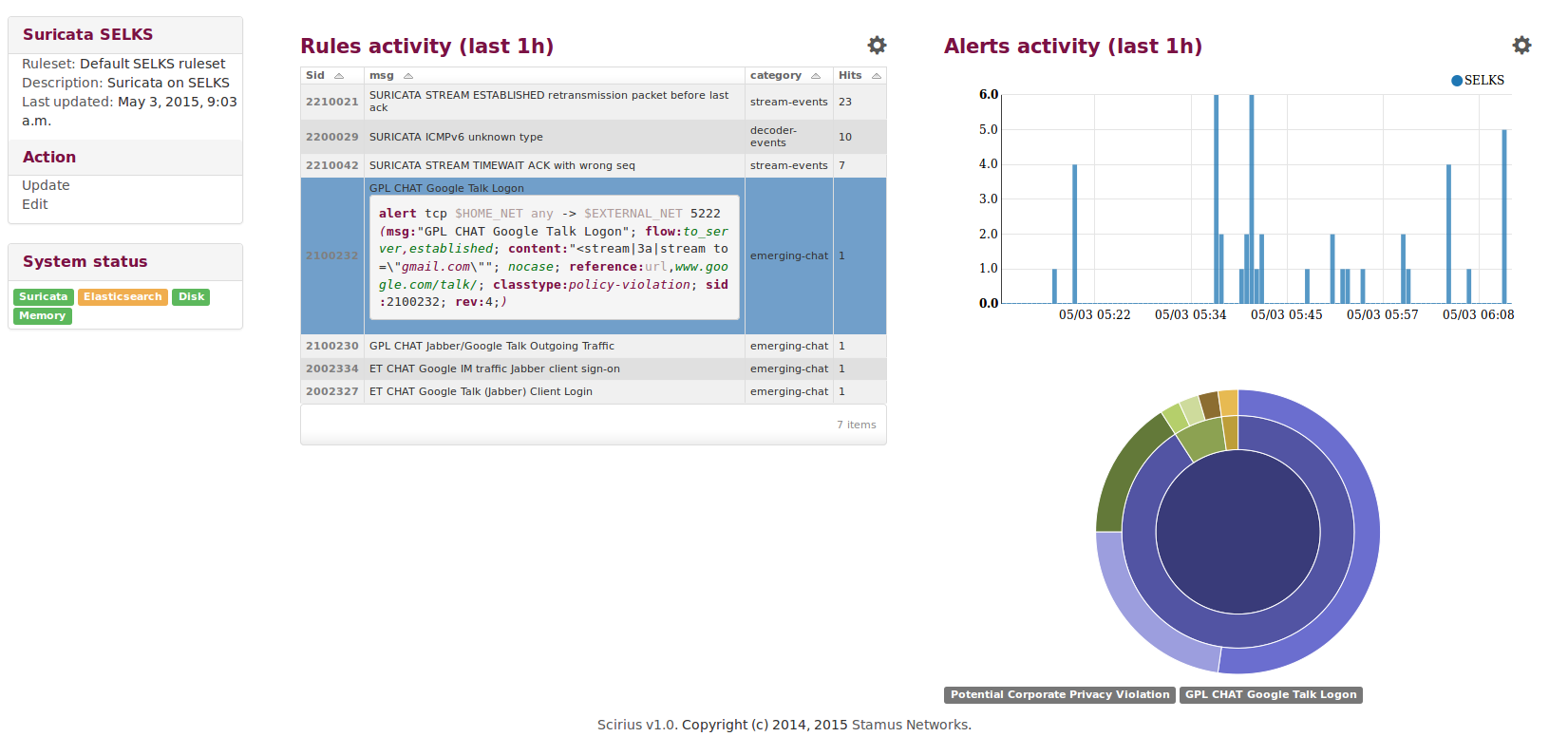

Today we announced the general availability of Scirius Threat Radar (now called Stamus NDR), a...

Every great story begins with the first chapter. And with each new chapter the characters develop...

SELKS 6 is out!

If you are still teleworking, you may wish to test and deploy this new edition to...

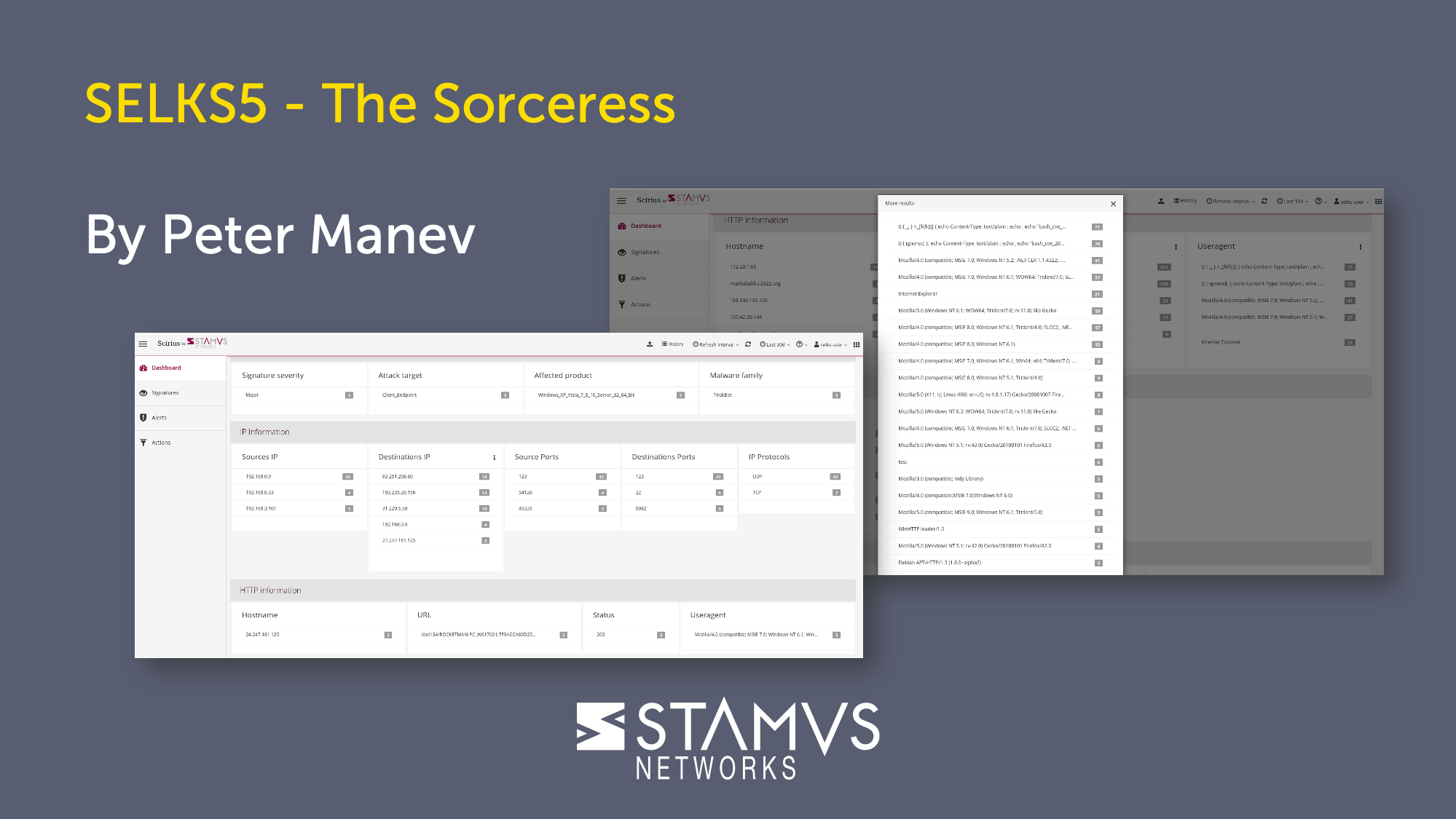

SELKS 5 is out! Thank you to the whole community for your help and feedback! Thank you to all the...

Hi! Yet another upgrade of our SELKS. We are very thankful to all the great Open Source projects and...

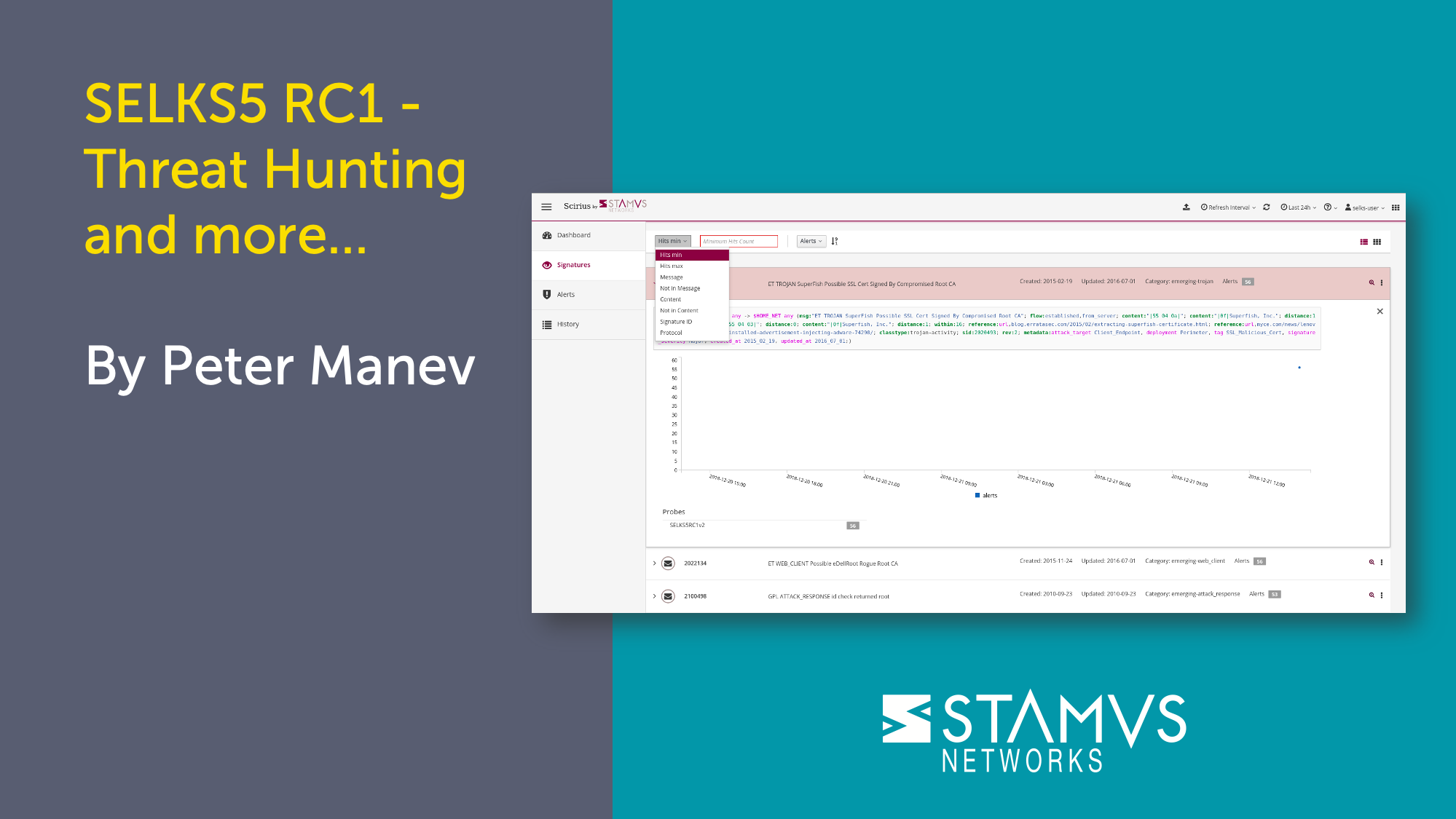

Hey! Our new and upgraded showcase for Suricata has just been released - SELKS5 Beta. Thanks to...

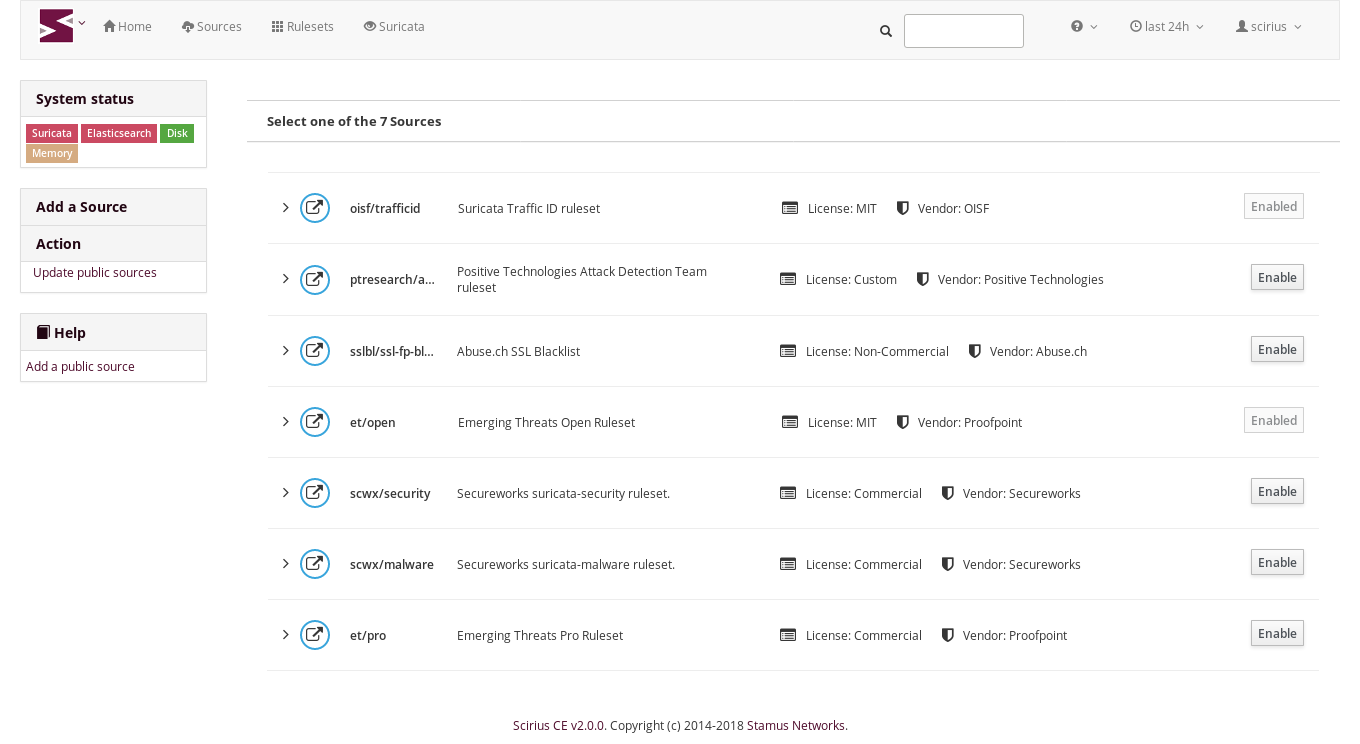

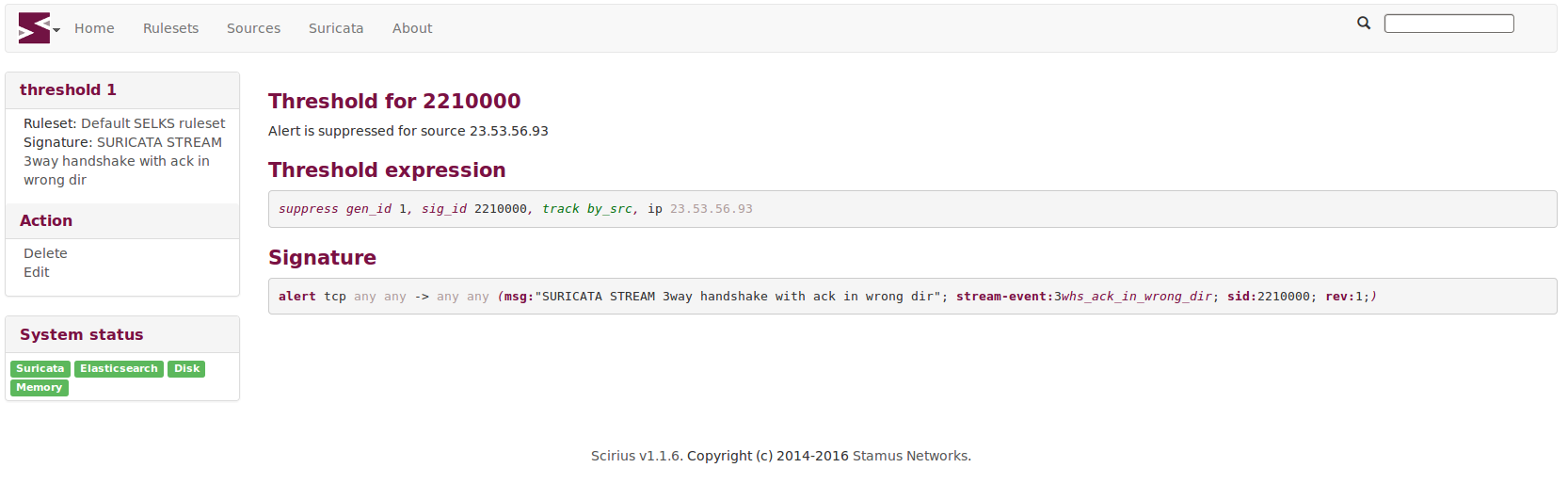

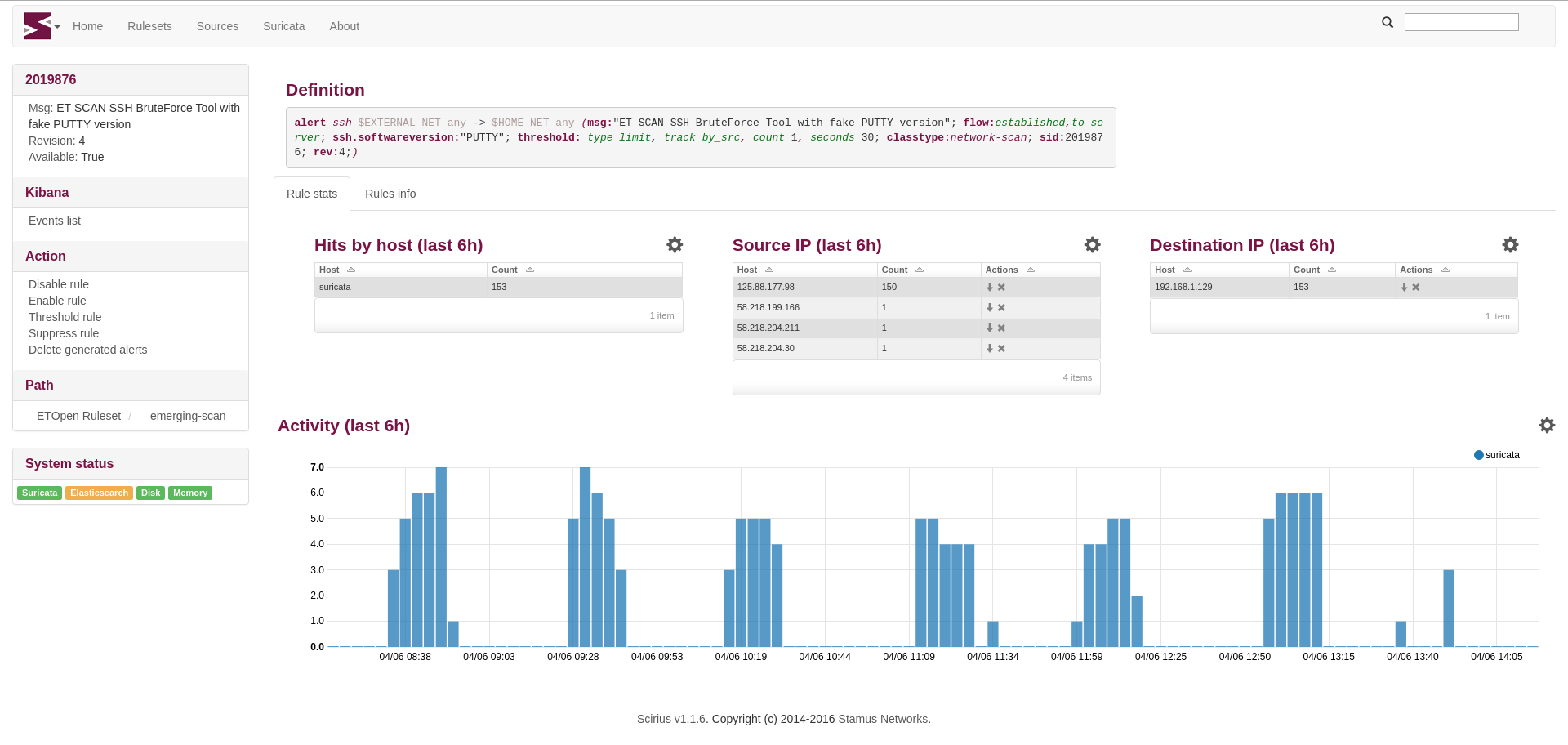

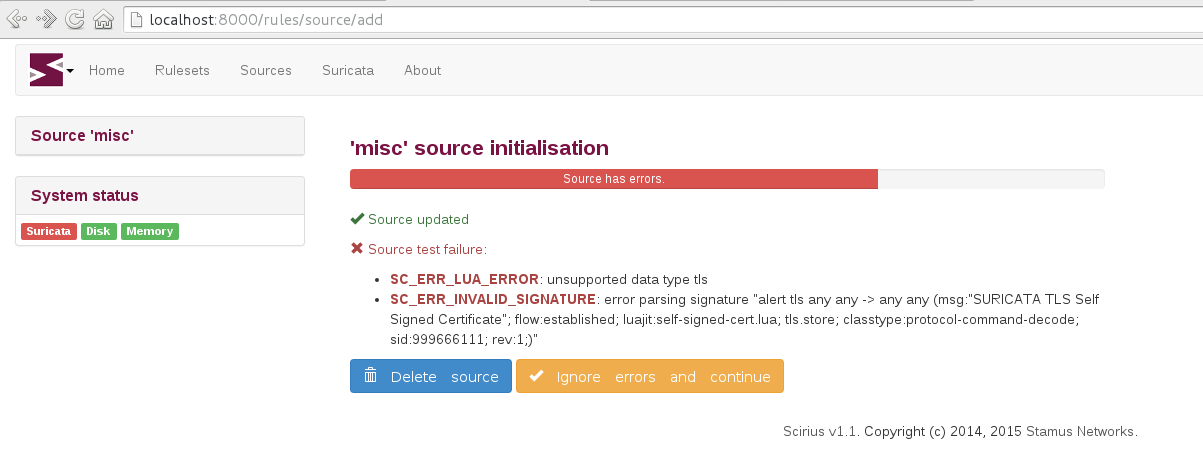

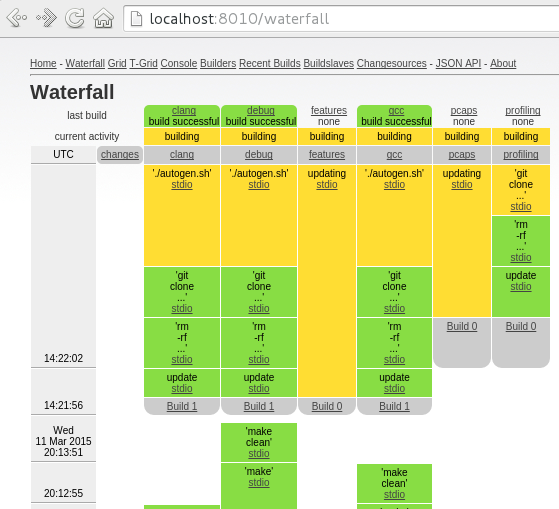

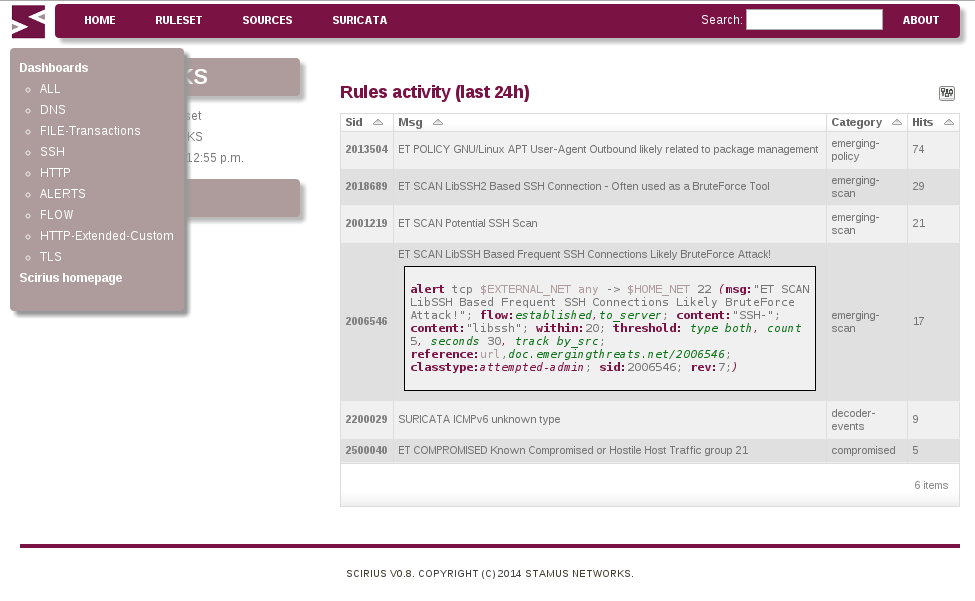

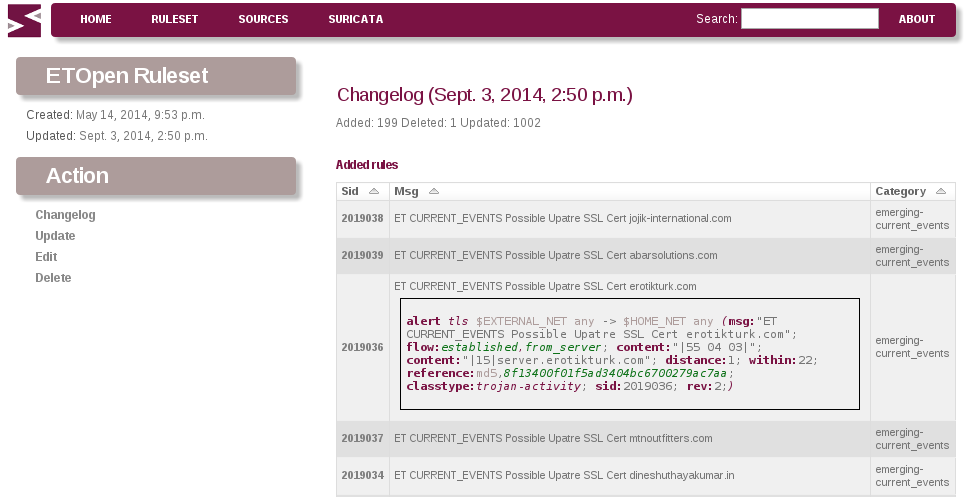

Following the release of Scirius Community Edition 2.0, Stamus Networks is happy to announce the...

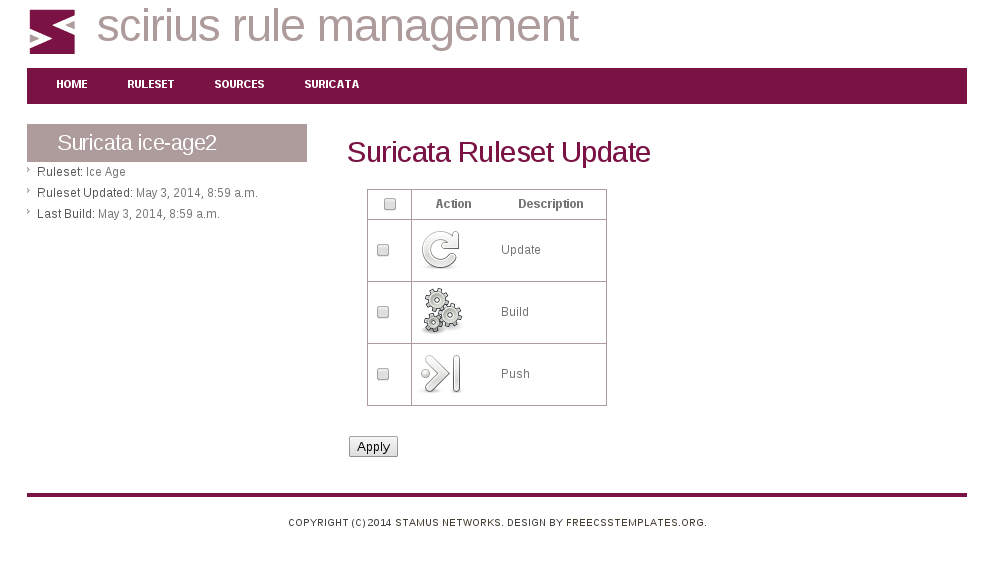

Stamus Networks is proud to announce the availability of Scirius Community Edition 2.0. This is the...

This first edition of SELKS 4 is available from Stamus Networks thanks to a great and helpful...

After a very valuable round of testing and feedback from the community we are pleased to announce...

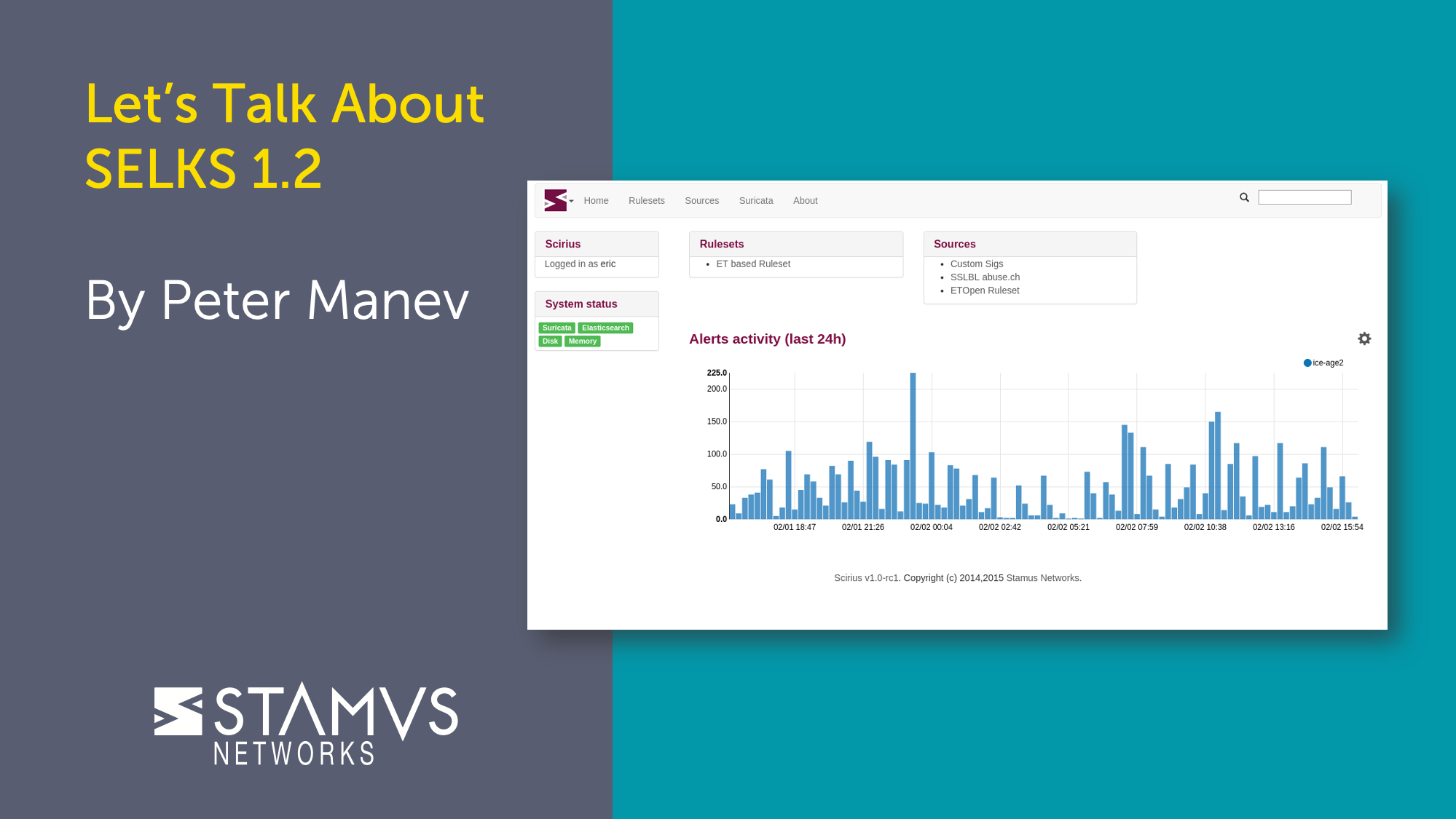

Stamus Networks is proud to announce the availability of Scirius 1.2.0. This release of our...

Yes, we did it: the most awaited SELKS 3.0 is out. This is the first stable release of this new...

Stamus Networks is proud to announce the availability of version 1.0, nicknamed "glace à la...

After some hard team work, Stamus Networks is proud to announce the availability of SELKS 3.0RC1.

Stamus Networks is proud to announce the availability of Scirius 1.1.6. This new release brings...

Stamus Networks is proud to announce the availability of the first technology preview of Amsterdam.

Stamus Networks team is proud to announce the availability of Scirius 1.1. This new release brings...

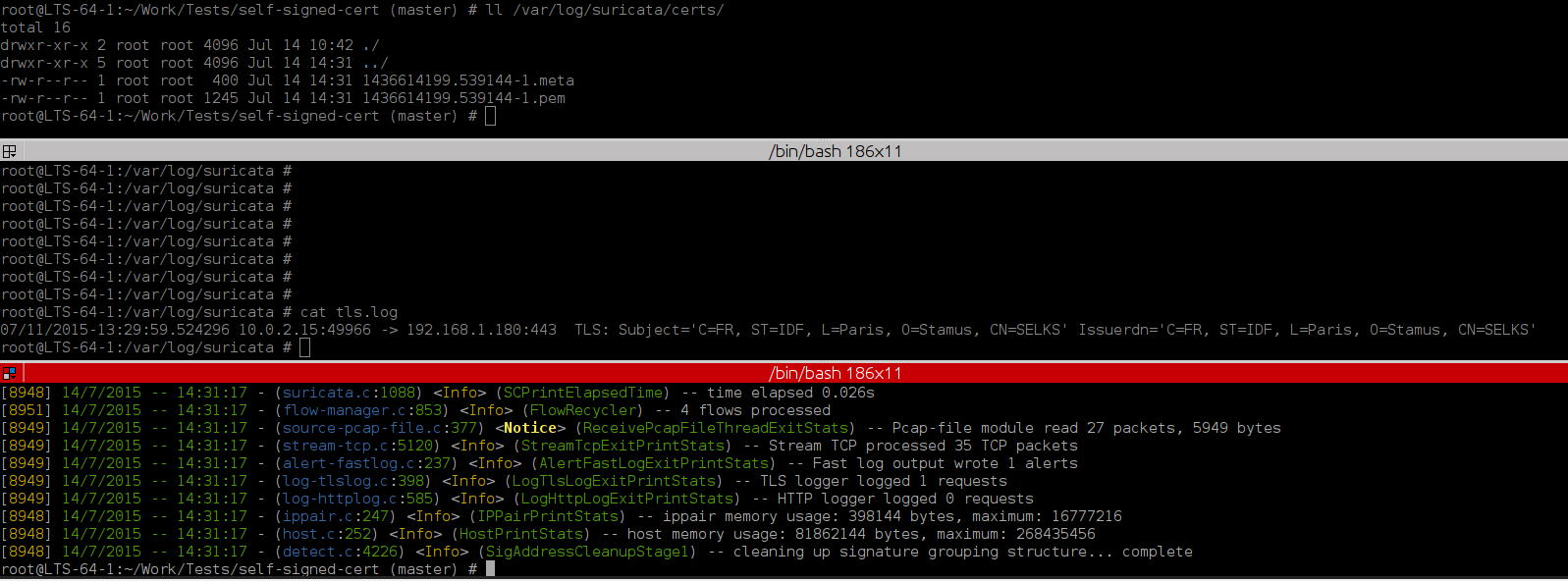

Introduction

This is a short tutorial on how you can find and store to disk a self-signed TLS...

Stamus Networks is proud to announce the availability of SELKS 2.0 release.

Stamus Networks is proud to announce the availability of Scirius 1.0. This is the first stable...

Stamus Networks is proud to announce the availability of the third release candidate of Scirius...

Stamus Networks is proud to announce the availability of SELKS 2.0 BETA1 release. With Jessie...

Stamus Networks is proud to announce the availability of the second release candidate of Scirius...

Introduction

Elasticsearch and Kibana are wonderful tools but, as with all tools, you need to know their...

Stamus Networks is proud to announce the availability of SELKS 1.1 stable release. SELKS is both...

Stamus Networks supports its own generic and standard Debian Wheezy 64-bit packaging repositories...

After giving a talk about malware detection and Suricata, Eric Leblond gave a lightning talk to...

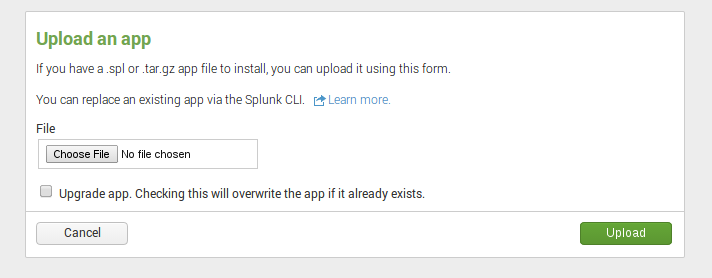

Stamus Networks is proud to announce the availability of SELKS 1.0 stable release. SELKS is both...

Stamus Networks is proud to announce the availability of SELKS 1.0 RC1. This is the first release...

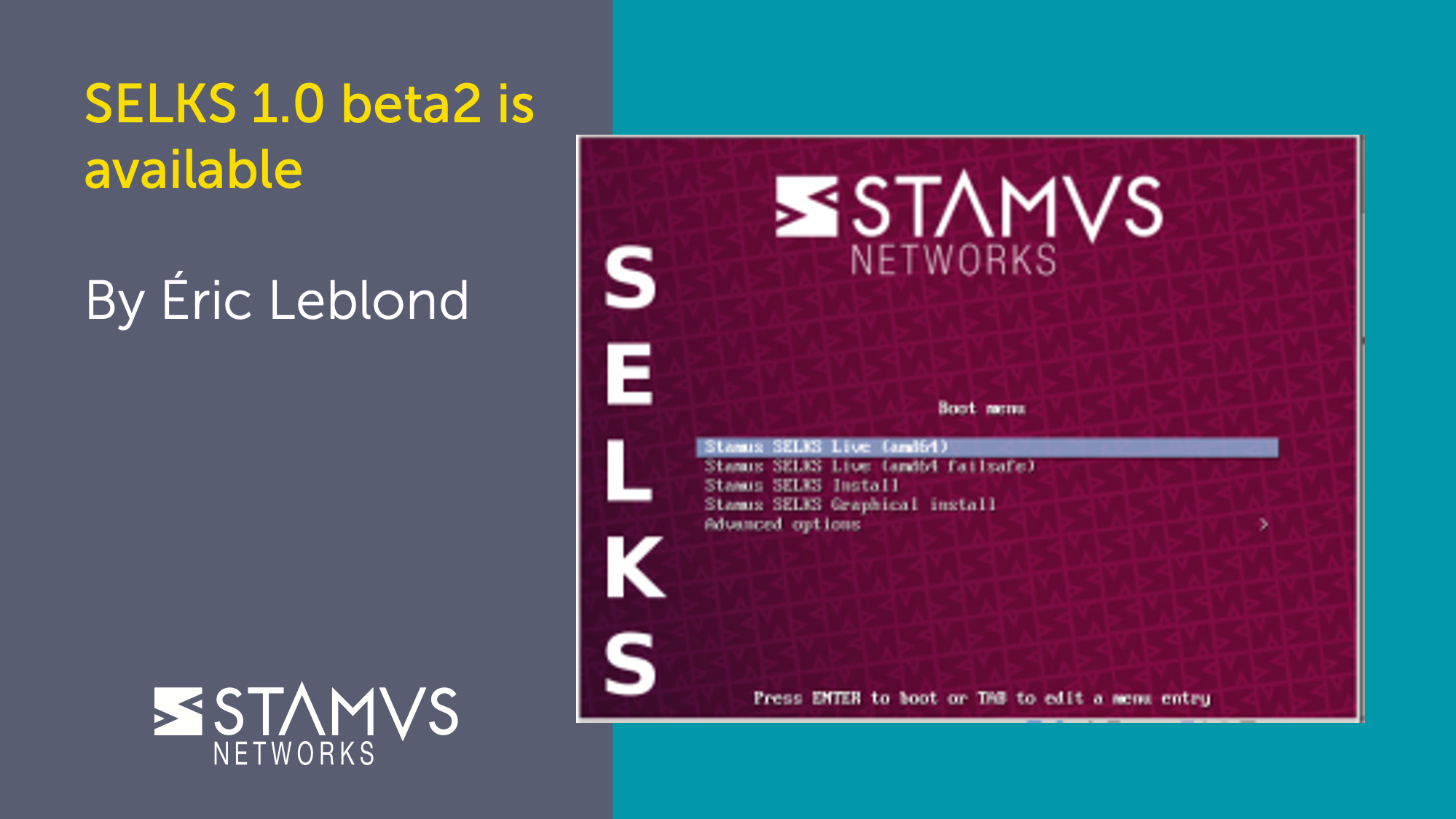

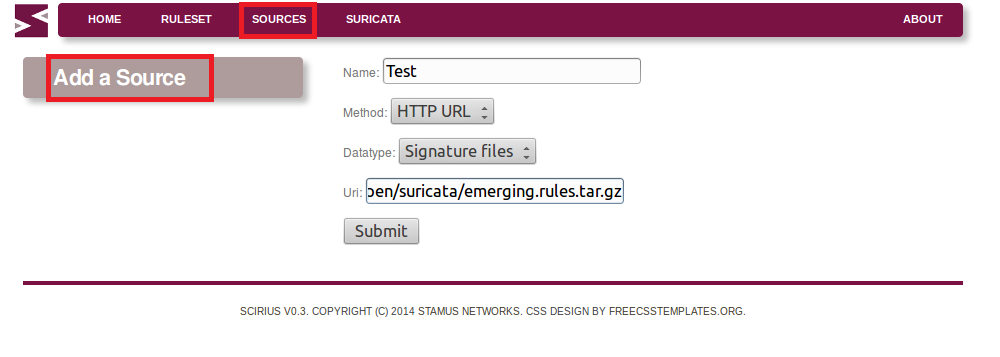

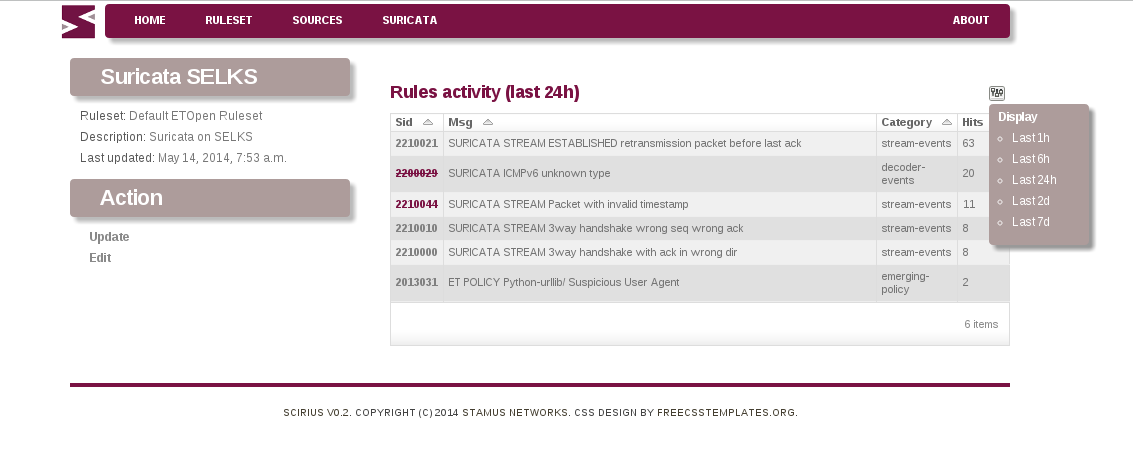

Stamus Networks is proud to announce the availability of the version 0.8 of Scirius, the web...

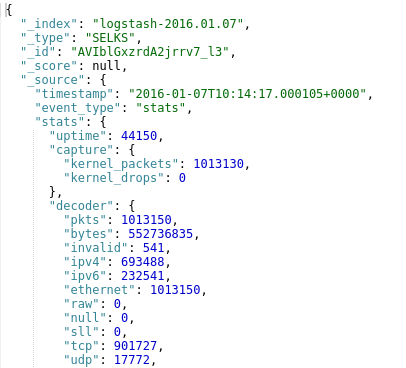

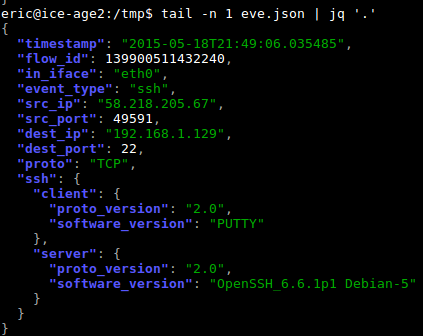

Thanks to the EVE JSON events and alerts format that appear in Suricata 2.0, it is now easy to...

The Ubuntu used in this tutorial:

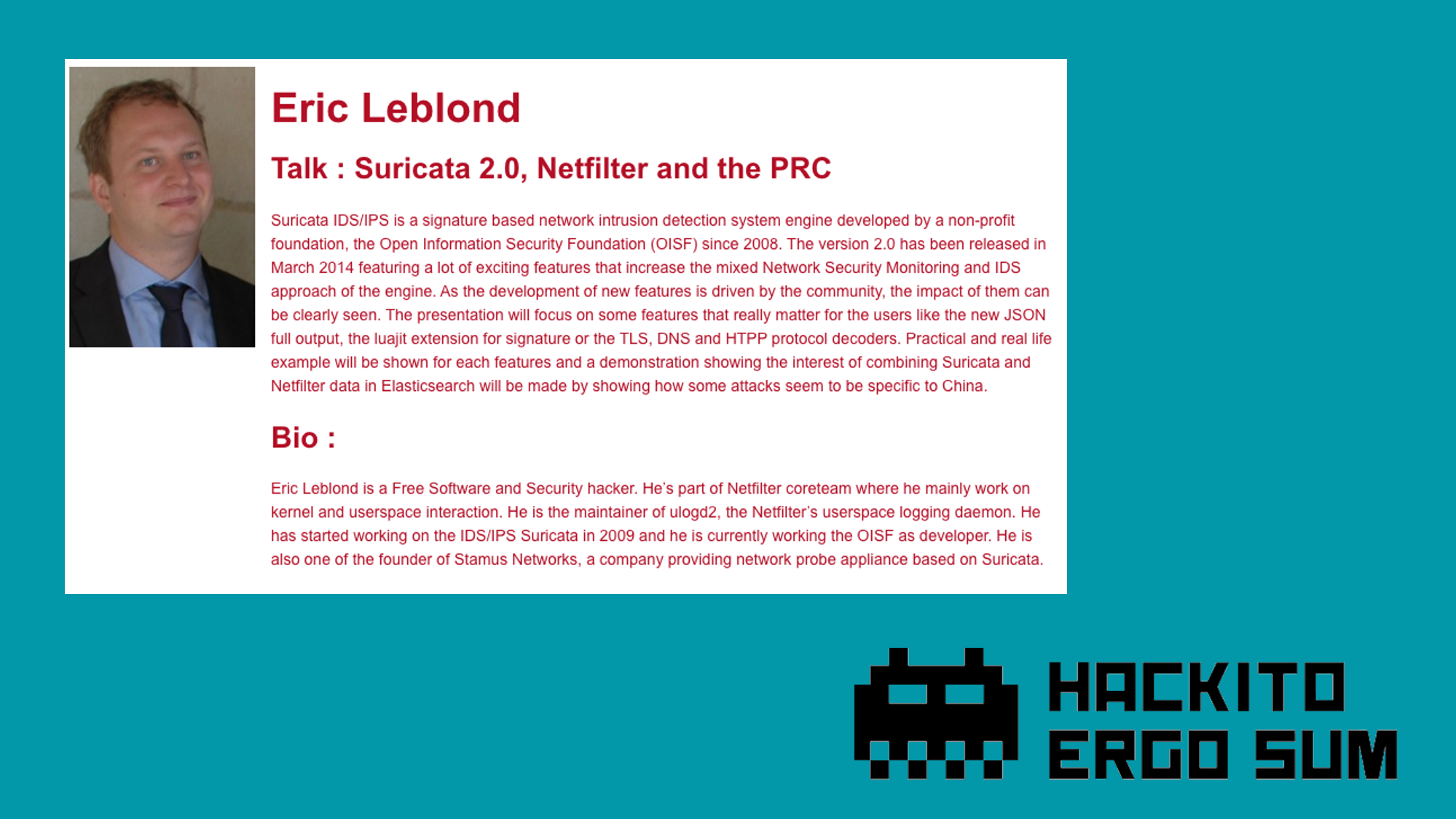

I've given a talk entitled "Suricata 2.0, Netfilter and the PRC" at the Hackito Ergo Sum conference.

This is the first blog post on Stamus Networks technical blog. You will find here posts focused on...